As organizations adopt artificial intelligence (AI) to drive innovation and efficiency, many are finding that flexibility and customization are essential rather than optional. That’s where the modular AI stack comes in. The architecture is gaining popularity because it lets teams build tailored AI solutions while reducing complexity and improving workflow efficiency.

What Makes a Modular AI Stack Different?

Traditional AI platforms often bundle everything—data processing, model training, deployment, and monitoring—into a single, monolithic system. While this can work for generic use cases, it’s rarely a good fit for businesses with specific requirements or existing infrastructure.

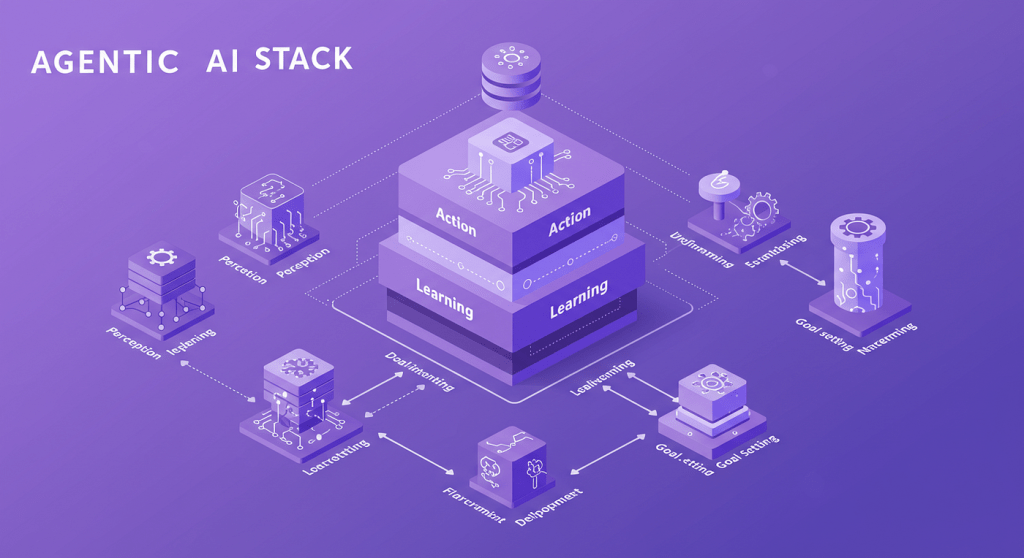

A modular AI stack, by contrast, splits the AI lifecycle into functional layers. These layers typically include:

- Data ingestion and processing

- Model training and optimization

- Inference and deployment

- Workflow orchestration

- Monitoring and governance

Each of these components can be developed, scaled, and maintained independently, making the system far more adaptable to change.
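
To make the idea of independent layers concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: the `ModularStack` class, the stage names, and the toy "model" that just averages numbers. It assumes each layer can be expressed as a function that takes the previous layer's output.

```python
from typing import Any, Callable, Dict, List

# Assumption: every layer is a plain callable that transforms a payload.
Stage = Callable[[Any], Any]


class ModularStack:
    """Runs named stages in order; any stage can be replaced on its own."""

    def __init__(self) -> None:
        # Dicts preserve insertion order, so stages run in registration order.
        self._stages: Dict[str, Stage] = {}

    def register(self, name: str, stage: Stage) -> None:
        # Re-registering an existing name swaps the stage but keeps its position.
        self._stages[name] = stage

    def run(self, payload: Any) -> Any:
        for stage in self._stages.values():
            payload = stage(payload)
        return payload


def ingest(raw: str) -> List[float]:
    """Data ingestion layer: parse comma-separated numbers."""
    return [float(x) for x in raw.split(",")]


def score(values: List[float]) -> float:
    """Inference layer: a toy 'model' that returns the mean."""
    return sum(values) / len(values)


audit_log: List[float] = []


def monitor(prediction: float) -> float:
    """Monitoring layer: record the prediction and pass it through."""
    audit_log.append(prediction)
    return prediction


stack = ModularStack()
stack.register("ingest", ingest)
stack.register("inference", score)
stack.register("monitoring", monitor)

result = stack.run("1.0,2.0,3.0")
```

Because the stages only meet at the payload they hand over, calling `stack.register("ingest", ...)` again with a different parser replaces that one layer and leaves inference and monitoring alone.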

Building Custom AI Solutions with a Modular Approach

Every organization has different data types, compliance concerns, performance requirements, and technical capabilities. A modular AI stack makes it possible to design AI systems that are custom-built to serve those unique needs.

For instance, a telecom company may need a high-speed real-time inference engine for analyzing call data, while a pharmaceutical firm might require robust compliance modules and secure model auditing. Using a modular AI stack, each organization can integrate only the components they need, optimizing both cost and performance.

This targeted approach is more efficient and can also lead to better model outcomes, because teams can fine-tune each layer without affecting the rest of the system.

Simplifying Workflow Management and Scaling with Ease

AI workflows are notoriously complex. They involve large volumes of data, iterative model experimentation, frequent updates, and collaboration across teams. A modular AI stack brings structure and clarity to this chaos.

For example, when a new data format forces a change to a preprocessing step, only the data ingestion module needs updating. As long as that module keeps emitting data in the shape the downstream modules expect, the rest of the pipeline (training, inference, and deployment) can remain untouched. This separation of concerns enables faster iteration, lower risk, and better collaboration between teams.
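
The format-change scenario can be sketched in a few lines of Python. The `Record` schema, the field names `usage` and `churned`, and the toy `train` function are assumptions made up for this example. The point is only that two ingestion functions feed the same unchanged training code.

```python
import csv
import io
import json
from typing import Dict, List

# Assumption: modules agree on this simple record shape as their contract.
Record = Dict[str, float]


def ingest_csv(text: str) -> List[Record]:
    """Original ingestion module: reads CSV with a header row."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        {"usage": float(row["usage"]), "churned": float(row["churned"])}
        for row in reader
    ]


def ingest_json(text: str) -> List[Record]:
    """Replacement ingestion module for a new JSON feed, same output shape."""
    return [
        {"usage": float(item["usage"]), "churned": float(item["churned"])}
        for item in json.loads(text)
    ]


def train(records: List[Record]) -> float:
    """Toy 'training': a usage threshold halfway between the two class means."""
    churned = [r["usage"] for r in records if r["churned"] == 1.0]
    stayed = [r["usage"] for r in records if r["churned"] == 0.0]
    return (sum(churned) / len(churned) + sum(stayed) / len(stayed)) / 2


csv_data = "usage,churned\n10,1\n30,0\n"
json_data = '[{"usage": 10, "churned": 1}, {"usage": 30, "churned": 0}]'

threshold_from_csv = train(ingest_csv(csv_data))
threshold_from_json = train(ingest_json(json_data))
```

Both calls yield the same threshold, and `train` never had to learn that the source format changed.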

Additionally, because each module operates independently, the stack becomes easier to scale. As your AI workload increases, you can scale only the relevant component, say the inference engine, without scaling the entire system. Scaling per module helps keep costs under control, because capacity is added only where the load actually is.

Keeping Up with Rapid Technological Change

AI is evolving at breakneck speed. New models, tools, and frameworks emerge regularly, and staying competitive means being able to adopt these advancements quickly. Monolithic systems often struggle to integrate new tools without major rework.

With a modular AI stack, adopting the latest technologies becomes significantly easier. Want to move from a legacy model to a new transformer-based architecture? Just update the model training module. Need to deploy models to edge devices instead of cloud servers? Swap out your deployment module. This flexibility ensures your AI stack can grow and evolve alongside your business needs and the broader AI landscape.
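
As a rough illustration of swapping a deployment module, the Python sketch below picks a deployer by name. The `Deployer` interface, the `CloudDeployer` and `EdgeDeployer` classes, and the strings they return are all hypothetical. A real deployer would call whatever serving or packaging tooling your team uses.

```python
from typing import Callable, Dict, Protocol


class Deployer(Protocol):
    """Assumed contract: anything with a deploy(model_name) method qualifies."""

    def deploy(self, model_name: str) -> str: ...


class CloudDeployer:
    def deploy(self, model_name: str) -> str:
        # Placeholder for pushing the model to a hosted endpoint.
        return f"{model_name} -> cloud endpoint"


class EdgeDeployer:
    def deploy(self, model_name: str) -> str:
        # Placeholder for packaging the model for on-device use.
        return f"{model_name} -> edge bundle"


# The rest of the stack only knows the target name, not the implementation.
DEPLOYERS: Dict[str, Callable[[], Deployer]] = {
    "cloud": CloudDeployer,
    "edge": EdgeDeployer,
}


def release(model_name: str, target: str) -> str:
    """Look up the deployment module by name and hand the model to it."""
    deployer = DEPLOYERS[target]()
    return deployer.deploy(model_name)


cloud_result = release("churn-v2", "cloud")
edge_result = release("churn-v2", "edge")
```

Moving from cloud to edge is then a one-word change in configuration, and a new target means adding one class and one dictionary entry.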

Real-World Applications Across Industries

The modular AI stack isn’t just a theoretical improvement—it’s actively driving results in real-world applications across industries:

- Finance: Modular fraud detection and risk scoring tools that can be updated independently of the core banking systems.

- Healthcare: Secure, HIPAA-compliant AI models that include modular layers for data anonymization and explainability.

- Retail: Scalable recommendation engines where user behavior tracking, product ranking, and A/B testing are all modularized.

- Manufacturing: Predictive maintenance systems built from modular sensors, anomaly detection models, and real-time dashboards.

Each use case benefits from the same core principle: being able to customize and optimize AI systems without starting from scratch or making disruptive changes to the infrastructure.

Making AI Development More Accessible

Another underappreciated benefit of the modular AI stack is that it lowers the barrier to entry for AI development. By decoupling components, smaller teams or less technically mature organizations can adopt AI incrementally.

For example, a business could begin with just a pre-trained model and a deployment module, skipping model training altogether. As the organization matures, it can then add more layers such as custom training pipelines, monitoring tools, or orchestration platforms. This progressive adoption path makes AI far more accessible and sustainable in the long term.
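
Here is one way that incremental path might look in Python. The `Service` class and the `pretrained_model` function are stand-ins invented for this sketch; in practice the model would be loaded from wherever you obtained it.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

Model = Callable[[List[float]], float]


def pretrained_model(features: List[float]) -> float:
    """Stand-in for a pre-trained model obtained elsewhere (hypothetical)."""
    return sum(features) / len(features)


@dataclass
class Service:
    """Deployment module; monitoring is optional and can be attached later."""

    model: Model
    monitor: Optional[Callable[[float], None]] = None

    def predict(self, features: List[float]) -> float:
        prediction = self.model(features)
        if self.monitor is not None:
            self.monitor(prediction)
        return prediction


# Stage 1: only a pre-trained model and a deployment module.
service = Service(model=pretrained_model)
first = service.predict([1.0, 3.0])

# Stage 2: add a monitoring layer without touching the model or the service code.
seen: List[float] = []
service.monitor = seen.append
second = service.predict([2.0, 6.0])
```

The first prediction runs with no monitoring at all. Once the team is ready, the monitor is plugged in and starts recording predictions from that point on.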

Conclusion: Embrace the Flexibility of Modular AI

In a world where business needs and technology are both evolving rapidly, rigid AI systems simply don’t cut it anymore. A modular AI stack offers the flexibility, control, and efficiency modern organizations need to build impactful, scalable AI solutions.

By breaking down the AI system into manageable, interchangeable parts, businesses can streamline their workflows, reduce development time, and continuously improve their models without friction. Whether you’re a startup building your first AI product or an enterprise scaling across departments, the modular approach ensures you’re prepared for both today’s needs and tomorrow’s innovations.
