In recent years, large language models have become integral to many applications in artificial intelligence. As organizations and researchers release increasingly capable models, a systematic way to compare them has become essential. This article examines these models from three angles: their architectures, the metrics used to evaluate them, and their applications. Comparing models along these dimensions clarifies their strengths and weaknesses.

Understanding Large Language Models

Large language models are machine learning models built to understand and generate human-like text. Trained on vast amounts of data, they learn to predict the next word (more precisely, the next token) in a sequence, a capability that also lets them answer questions and perform many other language tasks. Their development has changed the way we interact with technology and driven major advances in natural language processing (NLP).
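As a simplified illustration of next-word prediction, the sketch below shows only the final step: the model assigns a raw score (a logit) to each candidate, and a softmax converts those scores into a probability distribution from which the most likely candidate is chosen. The candidate words and scores are invented for illustration; a real model scores every token in a vocabulary of many thousands of subword tokens at each step, and may sample from the distribution rather than always taking the top choice.

```python
import math


def softmax(logits):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    # Subtracting the max score improves numerical stability without changing the result.
    m = max(logits.values())
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def predict_next(logits):
    """Greedy decoding: return the most probable candidate and the full distribution."""
    probs = softmax(logits)
    return max(probs, key=probs.get), probs


# Hypothetical scores a model might assign to candidate next words
# after the prompt "The cat sat on the".
logits = {"mat": 3.2, "floor": 2.1, "moon": -1.0}
word, probs = predict_next(logits)
print(word)  # mat
```

Greedy selection is used here only because it is the simplest decoding rule to show; it is not the only way models pick their next token.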

Architecture of Large Language Models

The architecture of a large language model plays a crucial role in its performance. Most contemporary models are based on the transformer architecture, whose self-attention mechanism lets each position in the input weigh every other position directly. This makes it easier to capture long-range dependencies within text and improves the model’s contextual understanding.

- Transformers: The introduction of transformers marked a significant shift in NLP. Unlike earlier recurrent models, which processed text one token at a time, transformers process all positions of an input sequence in parallel. This parallelism makes training far more efficient on modern hardware and helps the model learn complex patterns in language.

- Pre-training and Fine-tuning: Most large language models are trained in two stages. First, they are pre-trained on a large, diverse corpus of text, from which they learn the statistical properties of language. They are then fine-tuned on task-specific data, which improves their performance in targeted applications. This two-stage process is what allows a single pre-trained model to be adapted to many tasks.
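To make self-attention more concrete, the following is a minimal pure-Python sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, the core operation of the transformer. It simplifies heavily under stated assumptions: the query, key, and value projections are taken to be the identity (real models learn separate weight matrices for each), there is a single attention head, and the token vectors are invented. Note that the scores for every pair of positions come out of one matrix product rather than a token-by-token loop, which is the parallelism described in the list above.

```python
import math


def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m); matrices are lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]


def softmax_row(row):
    """Turn one row of scores into weights that sum to 1."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    s = sum(exps)
    return [e / s for e in exps]


def self_attention(x):
    """Single-head scaled dot-product self-attention.

    Assumption for illustration: identity projections, so Q = K = V = x.
    """
    d_k = len(x[0])
    q, k, v = x, x, x
    # Score every position against every other position in one matrix product.
    scores = matmul(q, transpose(k))
    scaled = [[s / math.sqrt(d_k) for s in row] for row in scores]
    # Each row becomes a distribution over input positions (the attention weights).
    weights = [softmax_row(row) for row in scaled]
    # Each output vector is a weighted average of the value vectors.
    return matmul(weights, v), weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three invented 2-d token vectors
out, weights = self_attention(tokens)
print(len(out), len(out[0]))  # 3 2
```

In this toy input, the first token attends equally to itself and to the third token (both have a dot product of 1 with it) and less to the second, which shows how attention weights follow vector similarity.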

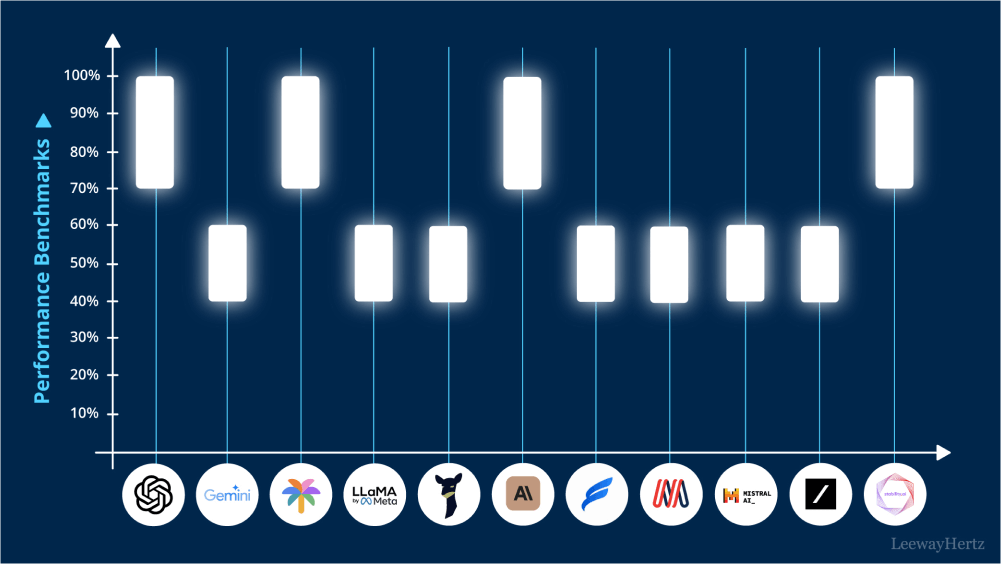

Performance Metrics for Comparing Large Language Models

When evaluating large language models, several performance metrics are commonly used. These metrics provide insights into a model’s effectiveness and efficiency in various tasks.

- Accuracy: This metric measures the fraction of a model’s predictions that are correct. In tasks such as sentiment analysis or question answering, higher accuracy indicates better performance, which makes accuracy a natural first point of comparison between large language models.

- F1 Score: The F1 score is the harmonic mean of precision (the fraction of predicted positives that are correct) and recall (the fraction of actual positives the model finds). It is especially useful for classification on imbalanced data, where a model can reach high accuracy simply by favoring the majority class; a low F1 score on the minority class exposes that behavior.

- Computational Efficiency: Alongside accuracy and F1 score, computational efficiency matters: how quickly a model produces results (latency and throughput) and how much memory and compute it requires. Because these models are so large, efficiency often determines whether a model is practical to deploy in real-world applications.

- Robustness: A robust model performs well across different datasets and conditions. Evaluating the robustness of large language models is essential for understanding their generalizability and reliability in various scenarios.
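Once a model’s outputs have been mapped to labels, accuracy and F1 are simple to compute. The sketch below (the function name `classification_metrics` and the sentiment labels are hypothetical) computes accuracy together with precision, recall, and F1 = 2 * P * R / (P + R) for one class treated as positive. Libraries such as scikit-learn provide equivalent, more complete functions; this version only makes the arithmetic visible.

```python
def classification_metrics(y_true, y_pred, positive):
    """Accuracy over all labels, plus precision/recall/F1 for the `positive` class."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    correct = sum(t == p for t, p in pairs)
    tp = sum(t == positive and p == positive for t, p in pairs)  # true positives
    fp = sum(t != positive and p == positive for t, p in pairs)  # false positives
    fn = sum(t == positive and p != positive for t, p in pairs)  # false negatives
    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Imbalanced toy data: 8 positive reviews, 2 negative ones.
# A model that always answers "pos" looks good on accuracy but never finds a "neg".
y_true = ["pos"] * 8 + ["neg"] * 2
y_pred = ["pos"] * 10
m = classification_metrics(y_true, y_pred, positive="neg")
print(m["accuracy"])  # 0.8
print(m["f1"])        # 0.0
```

The example reproduces the imbalance problem described above: 80% accuracy, but an F1 score of zero for the minority class.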

Applications of Large Language Models

The applications of large language models are vast and diverse. By comparing their capabilities, we can identify which models excel in specific tasks.

- Text Generation: One of the most popular applications of large language models is text generation. These models can create coherent and contextually relevant text based on given prompts. Comparing their performance in generating human-like text is critical for tasks like content creation and creative writing.

- Translation: Large language models have significantly advanced machine translation. By comparing their effectiveness in translating languages, we can identify which models offer the best accuracy and fluency in translated text.

- Conversational Agents: Large language models are often employed in chatbots and virtual assistants. Evaluating their ability to understand and respond to user queries is essential for improving user experience. A comparison of large language models in this area helps organizations choose the most suitable model for their applications.

- Sentiment Analysis: In business and marketing, sentiment analysis is crucial for understanding consumer opinions. Large language models can be evaluated based on their ability to accurately determine sentiment in text, providing insights into customer satisfaction.

Challenges in Comparing Large Language Models

While comparing large language models offers valuable insights, several challenges must be addressed.

- Data Bias: Large language models are trained on existing data, which may contain biases. This can lead to skewed results and unintended consequences in applications. Recognizing and mitigating bias is essential for developing fair and effective models.

- Interpretability: Understanding how large language models arrive at specific predictions can be challenging. Their complexity often makes it difficult to interpret results. Improving model interpretability is crucial for building trust and accountability in AI applications.

- Resource Intensity: Training and deploying large language models can require significant computational resources. This can limit access for smaller organizations and researchers. Finding ways to optimize resource usage is necessary for democratizing AI technologies.
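A back-of-the-envelope calculation shows why resource demands are so high. The memory needed just to hold a model’s weights is roughly the number of parameters times the bytes used per parameter. The sketch below assumes a hypothetical 7-billion-parameter model and uses decimal gigabytes (10^9 bytes). It deliberately ignores everything beyond the weights: training also needs memory for gradients and optimizer state (the Adam optimizer, for example, keeps two extra values per parameter), and inference needs memory for activations.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed only to store the weights, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


# Hypothetical 7-billion-parameter model stored at common numeric precisions.
for name, nbytes in [("float32", 4), ("float16/bfloat16", 2), ("int8", 1)]:
    print(f"{name}: {weight_memory_gb(7e9, nbytes):.0f} GB")
# prints 28 GB, 14 GB and 7 GB respectively
```

The same arithmetic explains why lower-precision formats and quantization are common ways to reduce resource usage: halving the bytes per parameter halves the memory needed for the weights.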

Conclusion

Comparing large language models by architecture, performance metrics, and applications shows where each model is strong and where it falls short, which is essential for making informed decisions about AI deployment. As the field evolves, ongoing research and comparison will continue to shape the future of natural language processing and artificial intelligence. By addressing the challenges outlined above, namely bias, limited interpretability, and heavy resource demands, we can harness these models’ potential while ensuring their ethical and effective use across domains.
