Artificial Intelligence (AI) is a transformative technology reshaping many industries. As machine learning models become more sophisticated, configuring them well becomes increasingly important. A key part of that configuration is hyperparameter tuning, which can substantially affect how well a model performs. In this article, we explore what hyperparameter tuning is, why it matters, and which techniques practitioners use to build effective machine learning models.

What is Hyperparameter Tuning?

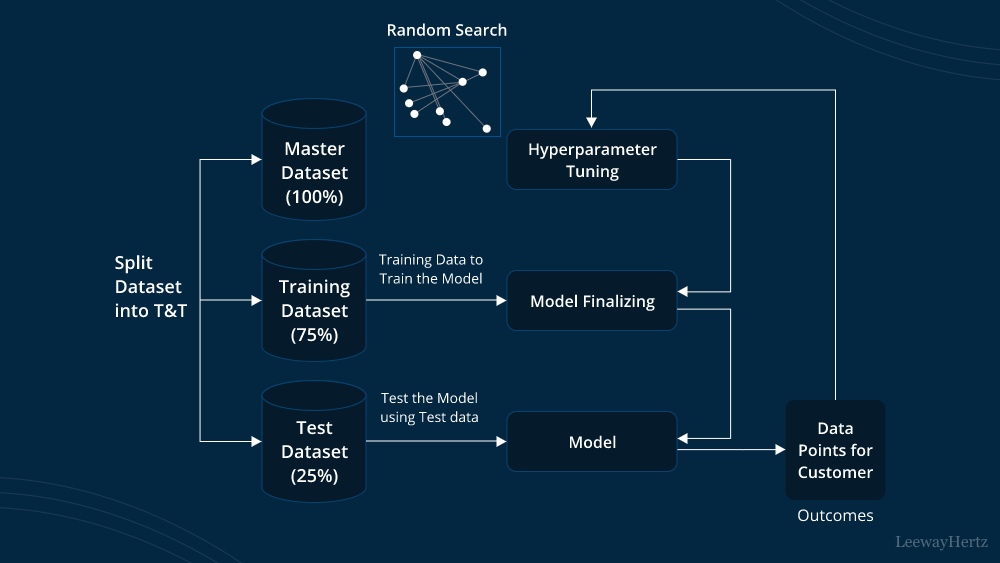

Hyperparameter tuning is the process of choosing the values of a model's hyperparameters to improve its performance. A model's parameters (for example, the weights of a neural network) are learned from the data during training. Hyperparameters are different: they are set before training begins and control how that training proceeds. Examples include the learning rate, the batch size, and the number of layers in a neural network. By selecting these values carefully, data scientists and engineers can improve the model's ability to make accurate predictions on new data.
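To make the distinction concrete, here is a minimal Python sketch, assuming a toy linear model trained with plain gradient descent on synthetic data. The function `train_linear_model` is hypothetical and not from any library. `learning_rate` and `n_epochs` are hyperparameters fixed by the caller before training, while `w` and `b` are parameters that the training loop learns from the data.

```python
def train_linear_model(xs, ys, learning_rate=0.05, n_epochs=200):
    """Fit y ~ w * x + b by gradient descent on the mean squared error.

    Hyperparameters (chosen before training): learning_rate, n_epochs.
    Parameters (learned from the data): w, b.
    """
    w, b = 0.0, 0.0  # parameters start at zero and are updated by training
    n = len(xs)
    for _ in range(n_epochs):
        # Gradients of the mean squared error with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # The learning rate scales every update step
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Noiseless synthetic data generated with w = 3 and b = 1
xs = [i / 10 for i in range(20)]
ys = [3.0 * x + 1.0 for x in xs]

w, b = train_linear_model(xs, ys, learning_rate=0.1, n_epochs=2000)
print(f"learned w = {w:.3f}, b = {b:.3f}")
```

Changing `learning_rate` or `n_epochs` does not change what the model is; it changes how training searches for `w` and `b`, which is exactly what hyperparameter tuning adjusts.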

Why Hyperparameter Tuning Matters

Hyperparameters control both the learning process (how fast and for how long the model trains) and the model architecture (how large and flexible the model is), so they directly affect the accuracy and efficiency of the resulting AI system. Without proper tuning, even a well-designed algorithm can underperform. Here are a few reasons why hyperparameter tuning is crucial:

- Improves Model Accuracy: Proper hyperparameter tuning helps find a good configuration for the model, which leads to better accuracy. For instance, a learning rate that is too small makes training slow, while one that is too large can make training unstable or cause it to diverge.

- Enhances Generalization: Hyperparameter tuning helps the model generalize, meaning it performs well not just on the training data but also on unseen data. This is essential for building robust AI systems that can handle real-world scenarios.

- Reduces Overfitting: Overfitting occurs when a model learns the training data too closely, including its noise and outliers, which hurts its performance on new data. Tuning regularization hyperparameters, such as the weight-decay strength or the dropout rate, and choosing when to stop training help keep overfitting in check.

- Optimizes Computational Resources: Well-chosen hyperparameters make better use of computational resources, reducing the time and cost of training models. For example, the batch size affects both how fast training runs and how much memory it consumes.
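The learning-rate point can be seen on the simplest possible objective. This is a toy sketch rather than a real training loop: it runs gradient descent on f(w) = w², whose minimum is at w = 0. Each step multiplies w by (1 − 2 × learning_rate), so the iteration converges only when the learning rate lies strictly between 0 and 1. The function name `gradient_descent_on_quadratic` is hypothetical.

```python
def gradient_descent_on_quadratic(learning_rate, n_steps=50, w0=1.0):
    """Minimize f(w) = w**2 (gradient 2 * w) starting from w0; return the final w."""
    w = w0
    for _ in range(n_steps):
        w -= learning_rate * 2 * w  # equivalent to w *= (1 - 2 * learning_rate)
    return w

# Same objective, same number of steps, three different learning rates
for lr in (0.01, 0.1, 1.1):
    print(f"learning_rate={lr}: final w = {gradient_descent_on_quadratic(lr):.3g}")
```

With these settings, the smallest rate is still well short of the minimum after 50 steps, 0.1 ends very close to 0, and 1.1 overshoots by a larger amount on every step and diverges. Nothing about the model changed between the three runs; only the hyperparameter did.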

Techniques for Hyperparameter Tuning

Several techniques are used to tune hyperparameters effectively. Each method has its own advantages and is chosen based on the specific needs of the model and the problem at hand. Here are some common techniques:

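- Grid Search: This technique involves specifying a set of values for each hyperparameter and evaluating the model for every possible combination. Because it is exhaustive, grid search quickly becomes computationally expensive: the number of combinations is the product of the number of values tried for each hyperparameter, so it grows exponentially as hyperparameters are added.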

- Random Search: Instead of trying every combination, random search samples hyperparameter values at random from specified ranges or distributions. It does not cover the space systematically, but it often finds good configurations with fewer evaluations than grid search, particularly when only a few of the hyperparameters strongly affect performance.

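- Bayesian Optimization: This method fits a probabilistic surrogate model (such as a Gaussian process) to the results of previous evaluations and uses it to decide which hyperparameters to try next. Because each choice is informed by earlier results, Bayesian optimization often needs fewer evaluations than grid or random search, which makes it attractive when each training run is expensive.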

- Hyperband: Hyperband combines random sampling of configurations with early stopping. It trains many configurations on a small budget (for example, a few epochs), discards the worst performers, and gives the remaining ones progressively more resources, so little compute is spent on unpromising settings.

- Automated Machine Learning (AutoML): AutoML frameworks automate parts of the machine learning workflow, typically including hyperparameter tuning and often model selection and data preprocessing as well. These tools simplify the tuning process and make it more accessible to non-experts.
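The difference between grid search and random search can be sketched in a few lines of Python. Here `validation_loss` is a hypothetical, made-up function standing in for "train the model and measure its validation loss"; in a real project each call would be a full training run, which is why the number of evaluations matters. Both searches get the same budget of 12 evaluations, and random search samples the learning rate on a log scale, a common practice for hyperparameters that span several orders of magnitude.

```python
import itertools
import math
import random

def validation_loss(learning_rate, batch_size):
    """Hypothetical stand-in for "train a model, return its validation loss".

    A made-up smooth function whose minimum is at learning_rate=0.03,
    batch_size=64. In practice each call would be a full training run.
    """
    return ((math.log10(learning_rate) - math.log10(0.03)) ** 2
            + 0.1 * (math.log2(batch_size) - 6) ** 2)

def grid_search(grid):
    """Evaluate every combination in the grid; return (best_loss, best_params)."""
    best = None
    for lr, bs in itertools.product(grid["learning_rate"], grid["batch_size"]):
        loss = validation_loss(lr, bs)
        if best is None or loss < best[0]:
            best = (loss, {"learning_rate": lr, "batch_size": bs})
    return best

def random_search(n_trials, seed=0):
    """Evaluate n_trials randomly sampled configurations; return (best_loss, best_params)."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        lr = 10 ** rng.uniform(-4, -1)  # log-uniform sample in [1e-4, 1e-1]
        bs = rng.choice([16, 32, 64, 128, 256])
        loss = validation_loss(lr, bs)
        if best is None or loss < best[0]:
            best = (loss, {"learning_rate": lr, "batch_size": bs})
    return best

# Same budget for both: 4 x 3 = 12 grid points versus 12 random trials
grid = {"learning_rate": [1e-4, 1e-3, 1e-2, 1e-1], "batch_size": [16, 64, 256]}
print("grid search:  ", grid_search(grid))
print("random search:", random_search(n_trials=12))
```

Grid search only ever tries the four learning rates listed in the grid, whereas random search can try up to twelve different ones, which is why it tends to do well when one hyperparameter matters much more than the others. Libraries such as scikit-learn provide ready-made versions of both strategies (`GridSearchCV` and `RandomizedSearchCV`).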

The Impact of Hyperparameter Tuning on AI Performance

The impact of hyperparameter tuning on AI is evident in various aspects of model performance. Proper tuning can lead to:

- Better Accuracy: Hyperparameter tuning helps find a model configuration that maximizes performance on validation data, that is, data held out from training. Optimizing training accuracy alone would simply reward overfitting.

- Faster Training Times: Well-chosen hyperparameters, such as a suitable learning rate or batch size, can make training converge in fewer steps, reducing the overall time required to train the model.

- Improved Robustness: Tuning can make the model more robust, helping it maintain its performance across different types of data and scenarios.

- Resource Efficiency: By finding the right balance of hyperparameters, models can be trained more efficiently, making better use of available computational resources and reducing costs.
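Why hyperparameters should be selected on held-out data can be shown with a small experiment. The sketch below assumes a toy one-dimensional k-nearest-neighbors regressor written from scratch (not any library's implementation) and synthetic noisy sine data, and treats the number of neighbors `k` as the hyperparameter. With k = 1, every training point is its own nearest neighbor, so the training error is exactly zero and choosing `k` by training error always picks 1. The validation error measures what actually matters: performance on data the model has not seen.

```python
import math
import random

def knn_predict(train, x, k):
    """Predict y at x as the mean y of the k training points closest to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error over `data` of k-NN predictions built from `train`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in data) / len(data)

# Toy data: y = sin(x) plus Gaussian noise, split into training and validation sets
rng = random.Random(0)
points = [(x, math.sin(x) + rng.gauss(0, 0.3))
          for x in (rng.uniform(0, 2 * math.pi) for _ in range(200))]
train, val = points[:100], points[100:]

candidates = [1, 3, 5, 9, 15]  # values of the hyperparameter k to compare
train_scores = {k: mse(train, train, k) for k in candidates}
val_scores = {k: mse(train, val, k) for k in candidates}

best_by_train = min(candidates, key=train_scores.get)
best_by_val = min(candidates, key=val_scores.get)
print("chosen by training error:  ", best_by_train)
print("chosen by validation error:", best_by_val)
```

Larger values of k average away more noise but blur the underlying curve, so the validation error typically favors a value between the extremes; the exact choice depends on the data and the random seed.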

Conclusion

The impact of hyperparameter tuning on AI is significant and multifaceted. It plays a critical role in improving model accuracy, generalization, and resource efficiency. By choosing a tuning technique that fits their problem and budget, AI practitioners can get strong performance from their models without wasting compute. As AI continues to evolve, the ability to tune hyperparameters effectively will remain a vital part of developing powerful and efficient AI systems. Understanding and leveraging hyperparameter tuning can be the key to unlocking the full potential of AI technologies.
