The rapid evolution of artificial intelligence (AI) has led to significant advancements in generative AI, a branch of AI focused on creating new content from learned patterns. This article provides a comprehensive overview of the generative AI tech stack, including key frameworks, infrastructure, models, and applications.

What is the Generative AI Tech Stack?

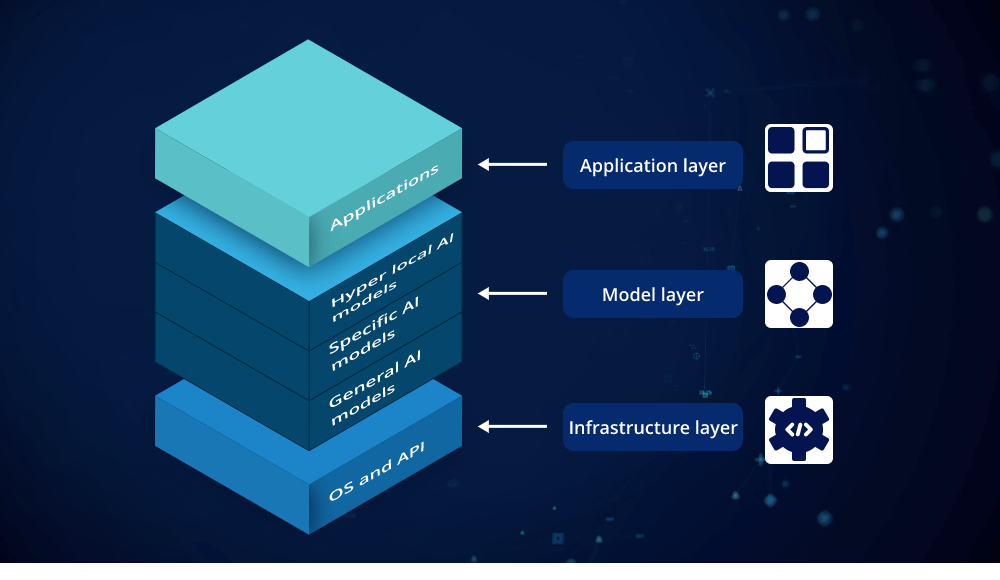

The generative AI tech stack is a collection of technologies and tools that work together to develop and deploy generative AI applications. This stack typically includes several layers: frameworks, infrastructure, models, and their practical applications. Each component plays a crucial role in ensuring that generative AI systems function effectively and efficiently.

Frameworks: The Building Blocks of Generative AI

Frameworks are the backbone of the generative AI tech stack. They provide the necessary tools and libraries for building, training, and deploying generative models. Popular frameworks include TensorFlow, PyTorch, and JAX.

- TensorFlow: Developed by Google, TensorFlow is widely used for developing machine learning and deep learning models. Its extensive library of pre-built functions and support for various neural network architectures make it a go-to choice for many developers.

- PyTorch: Created by Facebook's AI Research lab (now part of Meta AI), PyTorch is known for its flexibility and ease of use, especially in research environments. It builds computation graphs dynamically as the code runs, which makes models with data-dependent control flow easier to write and debug.

- JAX: A newer framework from Google, JAX is designed for high-performance machine learning research. It offers composable function transformations, including automatic differentiation (jax.grad), just-in-time compilation through XLA (jax.jit), and automatic vectorization (jax.vmap), which makes it well suited to experimenting with novel generative AI techniques.
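To make "automatic differentiation" and "dynamic computation graphs" concrete, here is a minimal sketch in plain Python. It is not how TensorFlow, PyTorch, or JAX are implemented; the `Value` class and its methods are hypothetical names chosen for illustration. The sketch records each operation as it runs and then applies the chain rule backwards through that record.

```python
import math


class Value:
    """A scalar that remembers how it was computed (hypothetical helper)."""

    def __init__(self, data, parents=(), grad_fns=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents    # Values this one was computed from
        self._grad_fns = grad_fns  # maps the upstream gradient to each parent's share

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def tanh(self):
        t = math.tanh(self.data)
        return Value(t, (self,), (lambda g: g * (1.0 - t * t),))

    def backward(self):
        # Order the recorded graph so each node comes after its parents,
        # then walk it in reverse, accumulating gradients via the chain rule.
        order, seen = [], set()

        def visit(v):
            if id(v) not in seen:
                seen.add(id(v))
                for p in v._parents:
                    visit(p)
                order.append(v)

        visit(self)
        self.grad = 1.0
        for v in reversed(order):
            for parent, fn in zip(v._parents, v._grad_fns):
                parent.grad += fn(v.grad)


# A single neuron: y = tanh(w * x + b). The graph is built as this line executes.
x, w, b = Value(0.5), Value(-2.0), Value(1.0)
y = (w * x + b).tanh()
y.backward()
print(y.data, w.grad, x.grad, b.grad)
```

Because the graph is recorded while ordinary Python code runs, loops and conditionals in a model need no special treatment. Production frameworks apply the same idea to tensors and run the resulting operations on accelerators.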

Infrastructure: The Foundation for Generative AI

Infrastructure provides the computational resources and environment needed to run generative AI models. This layer includes hardware, cloud services, and distributed computing platforms.

- Hardware: Generative AI models, especially those based on deep learning, require substantial computational power. Graphics Processing Units (GPUs) and Google's Tensor Processing Units (TPUs) are commonly used to accelerate model training and inference because they execute the large matrix operations at the core of neural networks in parallel.

- Cloud Services: Platforms like Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure offer scalable cloud infrastructure tailored for AI applications. These services provide virtual machines, storage, and managed AI services, allowing developers to easily scale their generative AI projects.

- Distributed Computing: Large-scale generative models are trained and served across many machines. Tools such as Apache Spark (distributed data processing) and Kubernetes (container orchestration) help prepare data and schedule, scale, and restart workloads across a cluster, which makes training and deployment more efficient and more resilient to machine failures.
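One common way to spread training across machines is data parallelism: each worker computes gradients on its own shard of the batch, the gradients are averaged, and every worker applies the same update. The sketch below simulates that in a single Python process. It does not use Spark or Kubernetes APIs, and the function names, the toy linear model, and the data are assumptions made for illustration.

```python
def shard_gradient(w, shard):
    """Gradient of mean squared error for the model y = w * x on one shard."""
    return sum(2.0 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker receives the average."""
    return sum(values) / len(values)


def data_parallel_step(w, shards, lr):
    # On a real cluster each shard's gradient is computed on a different machine.
    grads = [shard_gradient(w, shard) for shard in shards]
    return w - lr * all_reduce_mean(grads)


# Toy data generated from y = 3 * x, split evenly across 4 simulated workers.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
shards = [data[i::4] for i in range(4)]

w = 0.0
for _ in range(200):
    w = data_parallel_step(w, shards, lr=0.01)
print(w)
```

With equally sized shards, the averaged gradient equals the gradient over the full batch, so the result matches single-machine training while the work per machine shrinks.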

Models: The Heart of Generative AI

Generative models are at the core of the generative AI tech stack. They are designed to create new content by learning from existing data. Key types of generative models include:

- Generative Adversarial Networks (GANs): GANs consist of two neural networks, a generator and a discriminator, that are trained in opposition. The generator creates new data, while the discriminator tries to tell generated samples from real ones. Through this adversarial process, GANs can produce highly realistic images and, with more difficulty, other types of content such as audio.

- Variational Autoencoders (VAEs): VAEs learn a probabilistic latent representation of the input data and generate new samples by decoding points drawn from that latent space. They are particularly effective for tasks such as image generation and data augmentation.

- Transformers: Transformers, such as GPT (Generative Pre-trained Transformer) models, have revolutionized natural language processing. These models excel at generating coherent and contextually relevant text, making them ideal for applications in chatbots, content creation, and language translation.
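The adversarial process behind GANs can be sketched through its two loss functions. The example below is a simplified illustration in plain Python, assuming the discriminator outputs a probability that a sample is real. It uses the widely used non-saturating form of the generator loss, and the probability values are made up for demonstration.

```python
import math


def bce(prob, label):
    """Binary cross-entropy for one predicted probability and a 0/1 label."""
    eps = 1e-12  # keep log() away from zero
    prob = min(max(prob, eps), 1.0 - eps)
    return -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))


def discriminator_loss(d_real, d_fake):
    """The discriminator wants D(real) near 1 and D(fake) near 0."""
    real_term = sum(bce(p, 1) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants D(fake) near 1."""
    return sum(bce(p, 1) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs on generated samples.
fooled = generator_loss([0.9, 0.8])  # discriminator mostly believes the fakes
caught = generator_loss([0.1, 0.2])  # discriminator mostly rejects the fakes
print(fooled, caught)
```

The generator's loss falls as the discriminator is fooled, and the discriminator's loss falls as it separates real from generated samples. Training alternates between the two, so each network's improvement pushes the other to improve.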

Applications: Real-World Uses of Generative AI

The generative AI tech stack has a wide range of applications across various industries. Some notable examples include:

- Content Creation: Generative AI models can produce high-quality text, images, music, and videos. This capability is used in creating art, writing articles, and composing music, often with minimal human intervention.

- Healthcare: In the medical field, generative AI is used to propose candidate drug molecules and to help predict patient outcomes. By modeling biological processes, these models can assist in discovering novel treatments and optimizing clinical trials.

- Gaming and Entertainment: Generative AI enhances gaming experiences by creating realistic characters, environments, and storylines. It also plays a role in generating unique virtual worlds and interactive experiences.

- Finance: In finance, generative AI helps in predicting market trends, detecting fraud, and automating trading strategies. Its ability to analyze vast amounts of financial data allows for more informed decision-making.

Conclusion

The generative AI tech stack is a complex but fascinating interplay of frameworks, infrastructure, models, and applications. Each layer of the stack contributes to the development and deployment of sophisticated generative AI systems. By understanding these components, businesses and developers can better harness the power of generative AI to innovate and solve real-world problems. As technology continues to advance, the generative AI tech stack will evolve, offering even more capabilities and opportunities for various industries.
