Creating a private large language model (LLM) can provide businesses and individuals with customized, secure, and efficient AI tools. By tailoring a model to specific needs, you can get more relevant outputs, maintain data privacy, and keep full control over deployment. This guide walks you through the steps of building a private LLM, with the aim of keeping the process clear and easy to follow.

Understanding the Basics: What is a Private LLM?

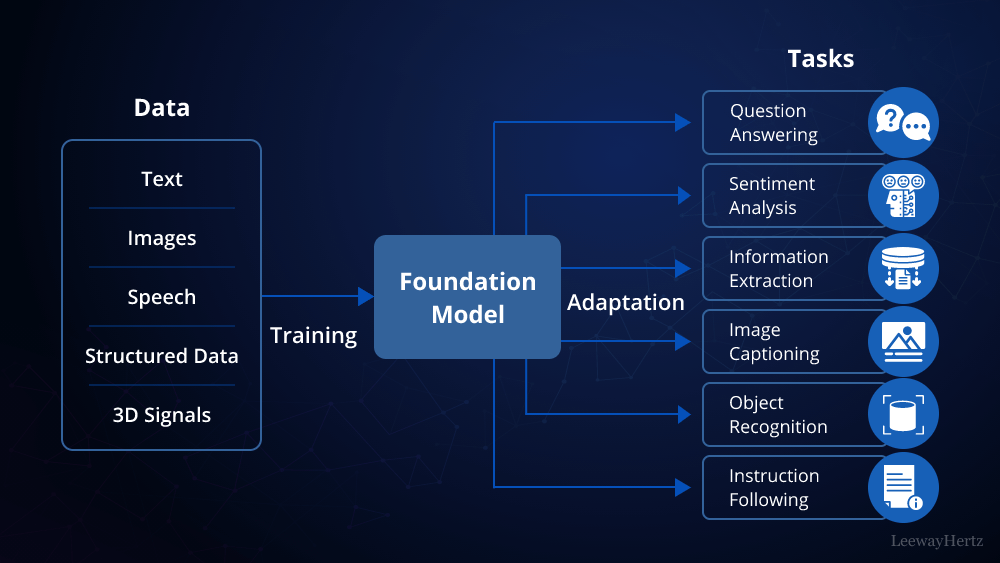

Before diving into how to build a private LLM, it’s essential to understand what it is. A private LLM is a language model that is trained, hosted, and managed in a private environment, away from public or shared resources. This keeps your data confidential and lets you fine-tune the model to your specific requirements.

Why Build a Private LLM?

Building a private LLM offers several advantages:

- Data Security: Sensitive data stays within infrastructure you control, which makes it easier to comply with data protection regulations.

- Customization: The model can be tailored to your specific needs, offering more relevant and accurate results.

- Cost Efficiency: While the initial setup can be expensive, a private LLM can be more cost-effective in the long run than paying for external services.

- Performance: You can optimize the model for your unique use cases, resulting in faster and more reliable performance.

Step 1: Define Your Requirements

The first step in building a private LLM is to define what you need from your model. Consider the following:

- Purpose: What specific tasks will the LLM perform? (e.g., customer service, content generation, data analysis)

- Data Sources: Identify the data that will be used to train the model. Ensure that this data is comprehensive, clean, and relevant.

- Scale and Performance: Determine the size of the model and the computational resources required.
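To make the scale question concrete, a back-of-the-envelope memory estimate can help size hardware before committing to a model. The sketch below is an approximation under stated assumptions: it counts only the weights for serving, and assumes roughly 16 bytes per parameter for full training with an Adam-style optimizer in 32-bit precision (weights, gradients, and two optimizer states). Activations, caches, and framework overhead are ignored, and the function names are illustrative.

```python
def inference_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for holding the weights only (2 bytes = 16-bit)."""
    return num_params * bytes_per_param / 1e9


def training_memory_gb(num_params: float, bytes_per_param: int = 16) -> float:
    """Approximate memory for full training. 16 bytes/param is an assumption:
    fp32 weights + gradients + two Adam optimizer states."""
    return num_params * bytes_per_param / 1e9


# Print rough estimates for a few common model sizes.
for billions in (1, 7, 13):
    n = billions * 1e9
    print(f"{billions}B params: "
          f"~{inference_memory_gb(n):.0f} GB to serve, "
          f"~{training_memory_gb(n):.0f} GB to train")
```

Treat these numbers as a lower bound for planning; real workloads need headroom on top of them.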

Step 2: Choose the Right Model Architecture

The model architecture determines which tasks your private LLM will handle well. There are several options available:

- GPT Variants: Decoder-only models that are well-suited for text generation tasks such as drafting, summarization, and chat.

- BERT and its Variants: Encoder-only models, ideal for tasks that involve understanding context and relationships within text, such as classification and entity extraction.

- Custom Models: For highly specific needs, you might consider designing a custom model architecture.

Your choice should be guided by the task requirements, available data, and computational resources.

Step 3: Prepare Your Data

Data preparation is a critical phase when building a private LLM. Follow these steps:

- Data Collection: Gather all relevant data, ensuring it aligns with the model’s intended purpose.

- Data Cleaning: Remove duplicates, correct errors, and standardize formats to improve the quality of your dataset.

- Data Annotation: If necessary, annotate data to provide the model with context, such as tagging parts of speech or labeling entities.
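The cleaning step above can be sketched with the standard library alone. This is a minimal example, assuming the dataset is a list of plain-text records; `normalize` and `clean_corpus` are hypothetical helper names, and real pipelines often add near-duplicate detection and language filtering on top.

```python
import re
import unicodedata


def normalize(text: str) -> str:
    """Standardize the Unicode form and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()


def clean_corpus(records: list[str]) -> list[str]:
    """Normalize each record, drop empty ones, and remove exact duplicates
    (compared case-insensitively) while preserving the original order."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for record in records:
        text = normalize(record)
        key = text.casefold()
        if text and key not in seen:
            seen.add(key)
            cleaned.append(text)
    return cleaned
```

For example, `clean_corpus(["Hello   world", "hello world ", ""])` keeps only the first record, with its whitespace collapsed.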

Step 4: Set Up the Environment

To build a private LLM, you need a suitable environment:

- Hardware: Invest in powerful hardware, such as GPUs or TPUs, that can handle intensive computations.

- Software: Choose a deep learning framework such as PyTorch or TensorFlow, along with a library like Hugging Face’s Transformers that supports LLM training.

- Cloud vs On-Premise: Decide whether to host the model on-premise for maximum control or in a private cloud environment for scalability.

Step 5: Train Your Model

Training is the most resource-intensive part of building a private LLM:

- Training Process: Split your data into training, validation, and test sets. Start with a smaller subset to validate your approach.

- Hyperparameter Tuning: Adjust parameters like learning rate, batch size, and epochs to optimize the model’s performance.

- Monitoring: Continuously monitor the training process to prevent overfitting and ensure convergence.
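Two of the ideas above, splitting the data and watching for overfitting, can be illustrated independently of any training framework. The following is a sketch under stated assumptions: the 80/10/10 split, the fixed seed, and the `EarlyStopping` helper are illustrative choices, and the validation loss is assumed to come from whatever training loop you use.

```python
import random


def split_dataset(items, val_frac=0.1, test_frac=0.1, seed=42):
    """Shuffle a copy of the data and split it into train/validation/test."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = int(len(shuffled) * test_frac)
    n_val = int(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


class EarlyStopping:
    """Signal a stop once validation loss has failed to improve
    for `patience` consecutive checks."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_checks = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_checks = 0
        else:
            self.bad_checks += 1
        return self.bad_checks >= self.patience
```

In a training loop, you would call `should_stop` after each validation pass and break out when it returns `True`. A validation loss that rises while training loss keeps falling is a common sign of overfitting.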

Step 6: Evaluate and Fine-Tune

Once the model is trained, evaluate its performance using metrics that match the task: accuracy, precision, and recall for classification-style tasks, or BLEU scores for translation and other text-generation tasks. Fine-tune the model by retraining on specific data subsets or adjusting hyperparameters to improve performance.
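For classification-style evaluations, accuracy, precision, and recall can be computed directly from predictions on the held-out test set. The sketch below assumes binary labels with `1` as the positive class; the function name is illustrative, and in practice an established evaluation library may be preferable.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return (accuracy, precision, recall) for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many were right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    return accuracy, precision, recall
```

Precision and recall often pull in opposite directions, so it helps to decide up front which kind of error is more costly for your use case.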

Step 7: Deploy and Monitor

Deploying the model involves integrating it into your applications. Consider the following:

- APIs: Use APIs to facilitate communication between your model and other applications.

- Scalability: Ensure that the infrastructure can scale to handle the anticipated load.

- Monitoring: Regularly monitor the model’s performance and update it as necessary to maintain relevance and accuracy.
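As an illustration of the API point, the sketch below wraps a model behind a small HTTP endpoint using only Python's standard library. Everything specific here is an assumption made for the example: the `/generate` path, the `prompt` and `completion` field names, and the `generate` function, which is a placeholder standing in for the real model call. A production deployment would typically use a dedicated serving framework with authentication and rate limiting.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate(prompt: str) -> str:
    """Placeholder for the real model call (hypothetical)."""
    return f"[model output for: {prompt}]"


def handle_payload(body: bytes) -> tuple[int, dict]:
    """Validate a JSON request body and return (HTTP status, response dict)."""
    try:
        data = json.loads(body)
    except (json.JSONDecodeError, UnicodeDecodeError):
        return 400, {"error": "body must be valid JSON"}
    prompt = data.get("prompt") if isinstance(data, dict) else None
    if not isinstance(prompt, str) or not prompt.strip():
        return 400, {"error": "'prompt' must be a non-empty string"}
    return 200, {"completion": generate(prompt)}


class GenerateHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/generate":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_payload(self.rfile.read(length))
        encoded = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(encoded)))
        self.end_headers()
        self.wfile.write(encoded)


def serve(host: str = "127.0.0.1", port: int = 8080) -> None:
    """Start the server; it binds to localhost only by default."""
    HTTPServer((host, port), GenerateHandler).serve_forever()
```

Calling `serve()` starts the endpoint. Keeping the request validation in `handle_payload`, separate from the HTTP handler, makes it possible to test that logic without opening a socket.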

Security Considerations

Security is paramount when building a private LLM. Implement strong access controls, encrypt data in transit and at rest, and regularly audit the system for vulnerabilities. Keeping the serving software and its dependencies updated with the latest security patches is also critical.
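One small piece of access control is checking API tokens safely. The sketch below is a minimal example using the standard library, assuming each client receives a random token and the server stores only a SHA-256 digest of it; the function names are hypothetical, and this does not replace a full identity or secrets-management system.

```python
import hashlib
import hmac
import secrets


def new_api_token() -> str:
    """Generate a random token to hand to a client once."""
    return secrets.token_urlsafe(32)


def hash_token(token: str) -> str:
    """Return the digest to store; the raw token itself is never stored."""
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


def is_authorized(presented_token: str, stored_hashes: set[str]) -> bool:
    """Compare the presented token's digest against stored digests,
    using a constant-time comparison for each candidate."""
    presented = hash_token(presented_token)
    return any(hmac.compare_digest(presented, stored) for stored in stored_hashes)
```

Storing digests rather than raw tokens limits the damage if the token store leaks, and `hmac.compare_digest` avoids the timing differences of an ordinary string comparison.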

Conclusion

Building a private LLM is a complex but rewarding process that offers a high degree of control, customization, and security. By following these steps, you can create a model that closely fits your specific needs. As you embark on this journey, remember that careful planning, resource allocation, and ongoing maintenance are key to a successful implementation. Whether you’re a business looking to enhance customer interactions or a researcher aiming to push the boundaries of AI, a private LLM can be a powerful tool in your arsenal.
