Introduction

In an era where artificial intelligence (AI) is becoming integral to various industries, AI model security is a paramount concern. As businesses and organizations increasingly rely on AI models to drive decisions and automate processes, ensuring these models are secure is crucial. In this article, we’ll explore the importance of AI model security, common threats, and best practices to safeguard your AI systems.

Why AI Model Security Matters

AI model security involves protecting AI systems from malicious attacks, data breaches, and unauthorized access. Given that AI models can influence significant decisions in finance, healthcare, and other critical sectors, any compromise in their security could have serious consequences. Protecting AI models is essential to ensure their integrity, confidentiality, and availability.

Common Threats to AI Models

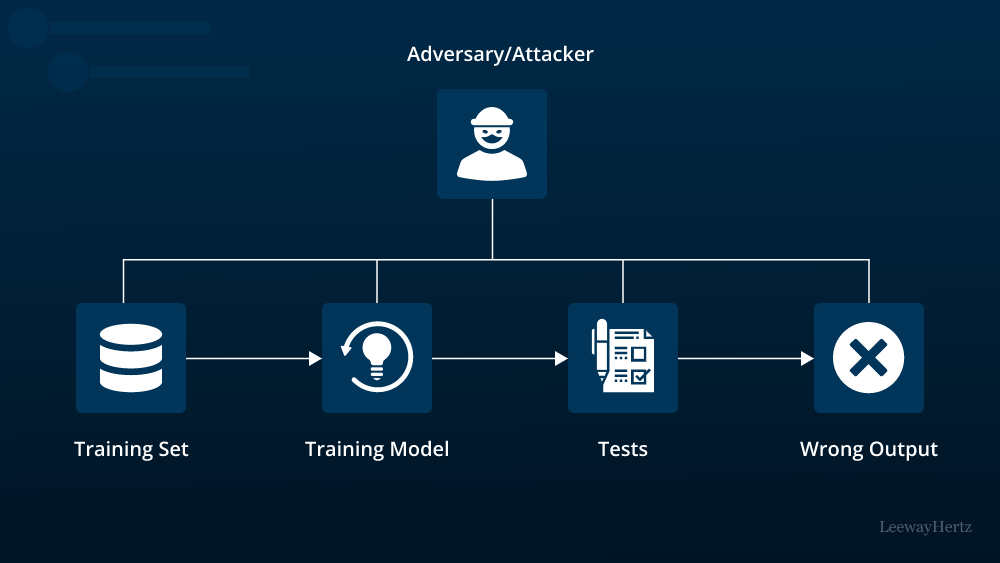

- Adversarial Attacks

Adversarial attacks involve manipulating input data to deceive AI models into making incorrect predictions or decisions. For instance, slight modifications to images or text data can cause a model to misclassify objects or misinterpret context. Ensuring AI model security involves developing defenses against such attacks to maintain the model’s accuracy and reliability.

- Data Poisoning

Data poisoning occurs when malicious actors introduce harmful data into the training set of an AI model. This corrupted data can skew the model’s learning process, leading to inaccurate or biased outputs. Effective AI model security requires monitoring and validating data to prevent such vulnerabilities.

- Model Theft

Model theft involves unauthorized individuals copying or reverse-engineering AI models. This can lead to intellectual property loss or misuse of sensitive data. Implementing robust AI model security practices, such as encryption and access controls, helps protect against model theft.

- Model Inversion

Model inversion attacks aim to reconstruct sensitive data used in training an AI model. For example, attackers might infer private information about individuals based on the model’s predictions. Ensuring AI model security involves techniques like differential privacy to protect the confidentiality of the training data.
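As a rough illustration of the differential privacy idea mentioned above, the sketch below adds Laplace noise to a simple count query, which is one standard way to limit how much a released statistic reveals about any single record. The function names, the `epsilon` value, and the toy records are assumptions made for illustration and do not come from any particular library. Applying differential privacy to model training itself (for example through noisy gradients) is considerably more involved.

```python
import math
import random


def laplace_noise(scale, rng):
    """Draw one sample from a Laplace(0, scale) distribution via the inverse CDF."""
    u = rng.random() - 0.5  # uniform in [-0.5, 0.5)
    # max() guards against log(0) in the very unlikely case u == -0.5
    return -scale * math.copysign(1.0, u) * math.log(max(1.0 - 2.0 * abs(u), 1e-300))


def private_count(records, predicate, epsilon, rng):
    """Return a noisy count of the records that match predicate.

    A count changes by at most 1 when one record is added or removed
    (sensitivity 1), so Laplace noise with scale 1/epsilon gives
    epsilon-differential privacy for this single query.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical toy data: ages of individuals in a training set.
ages = [23, 35, 41, 29, 62, 57, 33, 48]
rng = random.Random(0)  # seeded only so the sketch is repeatable
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
print(f"noisy count of records with age >= 40: {noisy:.2f}")
```

A smaller `epsilon` means more noise and stronger privacy, while a larger one keeps the answer closer to the true count. Each additional query spends more of the privacy budget, so this sketch covers a single release only.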

Best Practices for AI Model Security

- Secure Data Handling

To protect AI model security, it’s crucial to handle data securely. This includes encrypting data during transmission and storage, as well as ensuring access controls are in place to prevent unauthorized data access. Regularly auditing data handling practices can also help identify potential vulnerabilities.

- Robust Training Data Validation

Ensuring the quality and integrity of training data is vital for AI model security. Implementing validation procedures to detect and filter out malicious or biased data helps maintain the model’s reliability and accuracy. Data validation tools and techniques should be regularly updated to address new threats.

- Regular Model Audits and Testing

Conducting regular audits and security testing of AI models can help identify and address vulnerabilities. Penetration testing, adversarial testing, and performance monitoring are essential for evaluating how well the model withstands various security threats.

- Implementing Access Controls

Restricting access to AI models and their associated data is a key component of AI model security. Access controls should be implemented at various levels, including the model itself, the data it uses, and the systems that interact with it. Role-based access and multi-factor authentication can enhance security measures.

- Employing Encryption Techniques

Encryption is crucial for safeguarding the data and algorithms within AI models. When data or model parameters are encrypted, an attacker who intercepts them cannot read them without the corresponding key. Regularly updating encryption protocols helps address emerging security threats.

- Developing Robust Defense Mechanisms

Investing in advanced defense mechanisms, such as anomaly detection systems and adversarial training, can enhance AI model security. These mechanisms help detect unusual activities and improve the model’s resilience against various attack vectors.
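One simple form of the anomaly detection mentioned above is to flag inputs whose feature values sit far from what was seen at training time. The sketch below uses a robust z-score based on the median and the median absolute deviation (MAD). The function names, the 3.5 threshold, and the sample values are illustrative assumptions; a production system would more likely rely on a dedicated detector tuned on real traffic.

```python
import statistics


def fit_baseline(values):
    """Summarize reference data by its median and median absolute deviation."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    return med, mad


def is_anomalous(value, baseline, threshold=3.5):
    """Flag a value whose robust z-score exceeds the threshold."""
    med, mad = baseline
    if mad == 0:
        # At least half the reference values equal the median, so any
        # value that differs from it is treated as unusual.
        return value != med
    # 0.6745 rescales the MAD so the score is comparable to a standard
    # z-score when the data is roughly normally distributed.
    score = 0.6745 * abs(value - med) / mad
    return score > threshold


# Hypothetical feature values observed during training (e.g. request sizes).
training_values = [10.1, 9.8, 10.4, 10.0, 9.9, 10.2, 10.3, 9.7]
baseline = fit_baseline(training_values)

for incoming in (10.05, 9.6, 25.0):
    label = "ANOMALY" if is_anomalous(incoming, baseline) else "ok"
    print(f"{incoming:>6}: {label}")
```

The median and MAD are used here instead of the mean and standard deviation because a few poisoned or extreme reference values shift them far less. A flagged input could be logged, rate-limited, or routed for review rather than rejected outright.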

Conclusion

As AI continues to evolve and integrate into various aspects of our lives, AI model security remains a critical concern. Understanding the common threats and implementing best practices for protecting AI models are essential for maintaining their integrity and trustworthiness. By focusing on secure data handling, regular audits, and robust defense mechanisms, organizations can better safeguard their AI systems and ensure they operate reliably and securely.

Ensuring AI model security is not just about defending against current threats but also about anticipating and preparing for future challenges. As the field of AI continues to advance, ongoing vigilance and adaptation will be key to maintaining strong AI model security and protecting valuable data and intellectual property.
