Introduction

Generative AI lets machines create text, images, music, and even video. To get the most out of it in a specific application, you usually need to fine-tune a pre-trained model. Fine-tuning adapts a general-purpose model to a particular task, improving its performance on that task and aligning it with the requirements of your application. This article walks through the steps involved, from choosing a model to deploying it, with guidance for beginners and professionals alike.

Understanding Pre-Trained Models and Their Importance

Pre-trained models are AI models that have already been trained on large datasets and can serve as a starting point for specific tasks. They are foundational in generative AI because they save time and computational resources. Training a model from scratch is time-consuming and costly; fine-tuning an existing model typically reaches good results with far less data and compute.

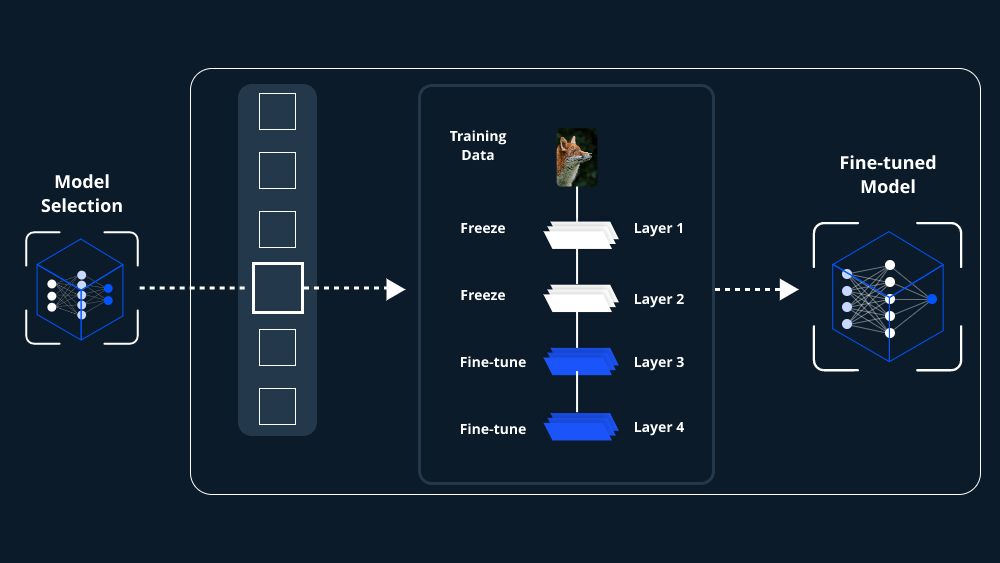

Step 1: Choose the Right Pre-Trained Model

The first step is selecting the appropriate model. The choice depends on your application: text generation, image synthesis, or another generative task. Popular pre-trained models include GPT (Generative Pre-trained Transformer) for text, StyleGAN for image generation, and MusicGen for music generation. Make sure the model you choose fits your application’s requirements and was trained on data relevant to your domain.

Step 2: Prepare Your Dataset

Once you’ve selected a pre-trained model, the next step is to prepare your dataset. This is a critical phase, because the model can only learn what the data shows it. Your dataset should be clean, well organized, and representative of the task at hand. For example, if you’re fine-tuning a model for text generation in a specific domain, your dataset should consist of text from that domain. You should also set aside part of the data for validation so you can measure progress on examples the model has not trained on. Data augmentation can increase the diversity and volume of your training data, which often improves the model’s performance.
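As a rough illustration of what "clean and well organized" can mean for a text dataset, the sketch below normalizes whitespace, drops very short fragments, removes exact duplicates, and holds out part of the data for validation. The function name `prepare_dataset`, the 20-character threshold, and the sample sentences are assumptions made for this example, not requirements of any particular library.

```python
import random


def prepare_dataset(texts, val_fraction=0.1, min_chars=20, seed=0):
    """Clean, de-duplicate, shuffle, and split raw text samples."""
    seen = set()
    cleaned = []
    for text in texts:
        # Collapse runs of whitespace and trim the ends.
        normalized = " ".join(text.split())
        # Drop fragments too short to be useful (threshold is an assumption).
        if len(normalized) < min_chars:
            continue
        # Skip exact duplicates, compared case-insensitively.
        key = normalized.lower()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(normalized)

    # Shuffle deterministically so the split is reproducible.
    random.Random(seed).shuffle(cleaned)
    n_val = max(1, int(len(cleaned) * val_fraction)) if len(cleaned) > 1 else 0
    # Return (training samples, validation samples).
    return cleaned[n_val:], cleaned[:n_val]


# Illustrative samples only.
raw = [
    "The patient  was prescribed 5 mg of the drug daily.",
    "the patient was prescribed 5 mg of the drug daily.",
    "ok",
    "Follow-up imaging showed no change after six weeks.",
    "Blood pressure was measured at every clinic visit.",
]
train, val = prepare_dataset(raw, val_fraction=0.25)
print(len(train), len(val))  # prints: 2 1
```

Real pipelines usually go further (near-duplicate detection, filtering by language or quality, tokenization), but the same clean-then-split order applies.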

Step 3: Set Up the Fine-Tuning Environment

Next, set up an environment that supports fine-tuning. This means having access to suitable hardware, such as GPUs or TPUs, and a machine learning framework, such as TensorFlow or PyTorch. Most pre-trained models are distributed through popular machine learning libraries, which makes them straightforward to load in your environment. You will also need the skills to manage the process: working knowledge of Python, data preprocessing, and model training.
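A quick way to check what hardware your environment exposes is to ask the framework directly. The sketch below assumes PyTorch and falls back to the CPU when PyTorch is not installed; the helper name `pick_device` is hypothetical, and the Apple "mps" backend is included only as an extra case beyond the GPUs and TPUs mentioned above. TPUs need additional libraries and are not covered here.

```python
def pick_device():
    """Return the best available device name: "cuda", "mps", or "cpu"."""
    try:
        import torch  # optional dependency; this sketch assumes PyTorch
    except ImportError:
        return "cpu"
    # NVIDIA (or ROCm) GPU visible to PyTorch.
    if torch.cuda.is_available():
        return "cuda"
    # Apple Silicon GPU backend; absent in older PyTorch releases.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"


print(f"Fine-tuning will run on: {pick_device()}")
```

If this reports "cpu" on a machine that has a GPU, the usual cause is a driver problem or a CPU-only build of the framework.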

Step 4: Adjust the Hyperparameters

Fine-tuning involves adjusting several hyperparameters to optimize performance, chiefly the learning rate, the batch size, and the number of training epochs. The learning rate controls how large each update to the model’s parameters is; for fine-tuning it is usually set lower than in pre-training so that updates refine the pre-trained weights rather than overwrite them. The batch size is the number of samples processed before each parameter update. The number of epochs is how many times the model passes through the entire training dataset. Finding the right balance among these settings is essential for achieving the best results.
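To make the interaction between these settings concrete, the sketch below computes how many parameter updates a run performs for a given dataset size, batch size, and epoch count, then enumerates a small grid of candidate configurations. The candidate values and the 10,000-example dataset size are illustrative assumptions, not recommendations.

```python
import itertools
import math


def total_steps(num_examples, batch_size, epochs):
    """Number of parameter updates in a full training run."""
    return math.ceil(num_examples / batch_size) * epochs


# Candidate values are illustrative assumptions, not recommendations.
search_space = {
    "learning_rate": [1e-5, 3e-5, 5e-5],
    "batch_size": [16, 32],
    "epochs": [2, 3],
}

# Every combination of the candidate values: 3 * 2 * 2 = 12 configurations.
configs = [
    dict(zip(search_space, values))
    for values in itertools.product(*search_space.values())
]

for config in configs:
    updates = total_steps(10_000, config["batch_size"], config["epochs"])
    print(config, "->", updates, "updates")
```

Halving the batch size roughly doubles the number of updates per epoch, which is one reason batch size and learning rate are usually tuned together rather than one at a time.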

Step 5: Begin Fine-Tuning

With everything in place, you can begin fine-tuning. Train the model on your prepared dataset; during this phase, the model’s weights are adjusted to better fit your specific task. Monitor the model’s performance during training to confirm that it is learning and not overfitting. Overfitting occurs when the model becomes too specialized to the training data and performs poorly on new, unseen data. A common sign is training loss that keeps falling while validation loss starts to rise. Use a held-out validation set, or cross-validation when the dataset is small, to assess the model’s performance throughout training.
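One common way to act on validation results during training is early stopping: halt once the validation loss has stopped improving for a set number of checks. The article does not prescribe this technique, so treat the sketch below as one possible approach. The `EarlyStopping` class and the loss values are made up for illustration; in a real run the losses would come from your training loop.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` checks."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.bad_checks = 0

    def should_stop(self, epoch, val_loss):
        if val_loss < self.best_loss - self.min_delta:
            # Validation loss improved: remember it and reset the counter.
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.bad_checks = 0
        else:
            self.bad_checks += 1
        return self.bad_checks >= self.patience


# Made-up loss curves: training loss keeps falling while validation loss
# turns upward after epoch 3, the typical signature of overfitting.
train_losses = [2.10, 1.60, 1.25, 1.00, 0.80, 0.65]
val_losses = [2.20, 1.80, 1.62, 1.60, 1.68, 1.75]

stopper = EarlyStopping(patience=2)
for epoch, (train_loss, val_loss) in enumerate(zip(train_losses, val_losses)):
    print(f"epoch {epoch}: train={train_loss:.2f} val={val_loss:.2f}")
    if stopper.should_stop(epoch, val_loss):
        print(f"stopping; best epoch was {stopper.best_epoch}")
        break
```

In practice you would also save a checkpoint whenever the validation loss improves, so the weights from the best epoch can be restored after stopping.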

Step 6: Evaluate the Fine-Tuned Model

After fine-tuning, evaluate the model on data it has not seen during training. This step confirms that the model can actually perform the task it was fine-tuned for. Use metrics relevant to your application, such as the BLEU score for text generation against reference outputs or the FID score for image generation, and review sample outputs by hand where automated metrics fall short. If performance is not satisfactory, revisit the previous steps: adjust the hyperparameters, or improve or replace the dataset.
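To give a sense of what a metric like BLEU measures, the sketch below computes clipped ("modified") n-gram precision between a generated sentence and a reference. This is only the core ingredient: full BLEU combines these precisions over several n-gram sizes and applies a brevity penalty, so for real evaluations an established implementation is the safer choice. The function names and example sentences are assumptions for illustration.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of the n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, reference, n):
    """Clipped n-gram precision, the core quantity behind BLEU."""
    cand_counts = ngrams(candidate, n)
    ref_counts = ngrams(reference, n)
    total = sum(cand_counts.values())
    if total == 0:
        return 0.0
    # Each candidate n-gram is credited at most as often as it occurs in the
    # reference, so repeating a correct word does not inflate the score.
    matched = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
    return matched / total


reference = "the cat sat on the mat".split()
candidate = "the cat sat on a mat".split()
print(modified_precision(candidate, reference, 1))  # 5 of 6 unigrams match
print(modified_precision(candidate, reference, 2))  # 3 of 5 bigrams match
```

A single wrong word costs one unigram but two bigrams, which is why higher-order precisions are more sensitive to fluency and word order.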

Step 7: Deploy the Fine-Tuned Model

Once you are satisfied with the model’s performance, the final step is deployment: integrating the fine-tuned model into your application so it can start generating outputs. Depending on your use case, this might mean deploying the model to a cloud platform, embedding it in a web application, or shipping it in a standalone software product. Build monitoring into the deployment so you can track the model’s performance in real time and make adjustments when needed.
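Monitoring can start small. The sketch below wraps a generation function and records request counts, errors, latency, and output length. The `MonitoredGenerator` class and the `fake_model` stand-in are hypothetical; in a real deployment the wrapped function would call your fine-tuned model, and the metrics would typically be exported to a monitoring system rather than held in memory.

```python
import time
from statistics import mean


class MonitoredGenerator:
    """Wrap a generation function and record simple per-request metrics."""

    def __init__(self, generate_fn):
        self.generate_fn = generate_fn
        self.latencies = []
        self.output_lengths = []
        self.errors = 0

    def __call__(self, prompt):
        start = time.perf_counter()
        try:
            output = self.generate_fn(prompt)
        except Exception:
            # Count the failure, then let the caller handle it.
            self.errors += 1
            raise
        self.latencies.append(time.perf_counter() - start)
        self.output_lengths.append(len(output))
        return output

    def summary(self):
        return {
            "requests": len(self.latencies),
            "errors": self.errors,
            "mean_latency_s": mean(self.latencies) if self.latencies else 0.0,
            "mean_output_chars": mean(self.output_lengths) if self.output_lengths else 0.0,
        }


def fake_model(prompt):
    """Hypothetical stand-in for a call to the fine-tuned model."""
    return prompt.upper() + "!"


generate = MonitoredGenerator(fake_model)
generate("hello")
generate("fine-tuned")
print(generate.summary())
```

A sudden shift in latency, error rate, or typical output length is often the first visible sign that inputs have drifted away from what the model was fine-tuned on.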

Conclusion

Fine-tuning a pre-trained model is a practical way to build high-quality, task-specific generative AI solutions. By carefully selecting a model, preparing the dataset, adjusting hyperparameters, monitoring training, and evaluating the results before deployment, you can develop an application that meets your needs. As generative AI continues to evolve, skill in fine-tuning will become increasingly valuable.
