Introduction to the Generative AI Tech Stack

Generative AI is one of the fastest-moving areas of artificial intelligence, and much of its progress rests on the Generative AI tech stack. This stack is made up of the tools, technologies, and frameworks used to build generative models, which are AI systems that create new content such as text, images, or music. In this article, we'll explore the essential components of the Generative AI tech stack, how they work together, and where they are applied.

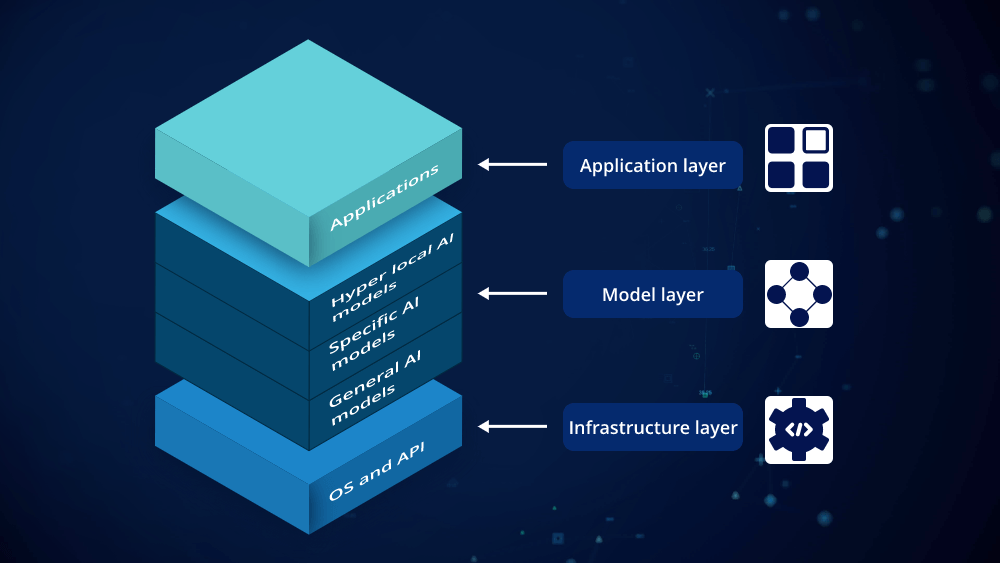

Core Components of the Generative AI Tech Stack

- Data Collection and Management: At the foundation of any Generative AI tech stack is data. Generative models are trained on large, high-quality, diverse datasets that match the task at hand, such as text for natural language generation or images for visual content creation. Storage architectures such as data lakes and data warehouses, together with data-processing tools, organize this data and support the cleaning and preprocessing (for example deduplication, filtering, and normalization) that must happen before training.

- Machine Learning Frameworks: Machine learning frameworks are the backbone of the Generative AI tech stack. They provide the infrastructure needed to build, train, and evaluate generative models. Popular frameworks include TensorFlow, PyTorch, and JAX. Each offers different features and optimizations, so developers can choose the one that best fits their needs: TensorFlow is known for its scalability and production readiness, PyTorch for its dynamic computation graph and ease of use, and JAX for its high-performance numerical computing capabilities.

- Generative Models: Generative models are the heart of the Generative AI tech stack. They can be broadly classified into several categories, including:

  - Generative Adversarial Networks (GANs): GANs consist of two neural networks, a generator and a discriminator, that are trained in opposition. The generator creates new data instances from random noise, while the discriminator tries to tell real training examples apart from generated ones; each network's training signal comes from the other. GANs are widely used for generating realistic images and videos.

  - Variational Autoencoders (VAEs): VAEs generate new data instances that resemble the training data. An encoder maps each input to a distribution over a latent space, and a decoder maps latent vectors back to data; new samples are produced by drawing latent vectors from the prior distribution and decoding them. VAEs are often used for tasks such as image generation and denoising.

  - Transformers: Transformers, built around the attention mechanism, have revolutionized natural language processing (NLP) and are now being applied to other domains as well. GPT-3 is a decoder-only transformer used for text generation, while BERT is an encoder-only transformer suited to understanding tasks such as classification rather than open-ended generation. Transformer models are also used for translation and more.

- Training and Optimization Tools: Training generative models requires significant computational resources. Orchestration and distributed-computing tools, such as Kubernetes and Apache Spark, help manage and scale these resources across clusters. Additionally, techniques like hyperparameter tuning and regularization are essential for improving model performance. Libraries such as Optuna and Hyperopt automate the search for good hyperparameters.

- Deployment and Integration: Once a generative model is trained, it needs to be deployed and integrated into applications. Containerization tools like Docker and cloud services such as AWS, Google Cloud, and Azure provide the infrastructure to run models in production environments and to keep them scalable and reliable. APIs and SDKs then expose the models to existing applications and workflows.

- Ethics and Governance: As with any AI technology, ethical considerations and governance are critical components of the Generative AI tech stack. Issues such as bias in training data, the potential for misuse, and the need for transparency must be addressed. Tools and frameworks for monitoring and auditing AI systems can help mitigate these concerns and ensure responsible use of generative technology.
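
To make the preprocessing part of the Data Collection and Management component more concrete, here is a minimal sketch of text cleaning in plain Python. It is an illustration under stated assumptions, not a production pipeline: the function names `normalize` and `clean_corpus` and the `min_words=3` threshold are hypothetical, and real pipelines typically add near-duplicate detection, language filtering, and quality scoring on top of this.

```python
import re
import unicodedata

def normalize(text: str) -> str:
    # Unicode-normalize (NFKC), collapse runs of whitespace, strip the ends.
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()

def clean_corpus(docs, min_words=3):
    """Hypothetical helper: normalize documents, drop very short ones, and
    remove exact (case-insensitive) duplicates, keeping first-seen order."""
    seen = set()
    cleaned = []
    for doc in docs:
        norm = normalize(doc)
        if len(norm.split()) < min_words:
            continue  # too short to be a useful training example
        key = norm.lower()
        if key in seen:
            continue  # duplicate of an earlier document
        seen.add(key)
        cleaned.append(norm)
    return cleaned

corpus = [
    "Generative models learn a data distribution.",
    "generative   models learn a data distribution.",
    "Too short",
    "  VAEs encode inputs into a latent space.  ",
]
print(clean_corpus(corpus))
```

Here the second document is dropped as a duplicate of the first once whitespace and case are normalized, and "Too short" falls below the assumed word threshold.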
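
The GAN description in the Generative Models component can be made precise through the losses the two networks minimize. The sketch below assumes the standard binary cross-entropy formulation, with the commonly used non-saturating generator loss; it operates on discriminator output probabilities only, so the networks themselves (and the function names used here) are illustrative assumptions rather than any particular library's API.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator: it should output values
    near 1 for real samples and near 0 for generated samples.
    d_real, d_fake: lists of discriminator probabilities strictly in (0, 1)."""
    real_term = sum(-math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(-math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: lower when the discriminator assigns
    a high 'real' probability to generated samples."""
    return sum(-math.log(p) for p in d_fake) / len(d_fake)

# A maximally uncertain discriminator (0.5 everywhere):
print(round(discriminator_loss([0.5, 0.5], [0.5, 0.5]), 4))  # 1.3863
print(round(generator_loss([0.5, 0.5]), 4))  # 0.6931
```

In training, the two losses are minimized alternately with respect to the discriminator's and the generator's parameters, which is what puts the networks "in opposition".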
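
The VAE description in the Generative Models component rests on two pieces of arithmetic: sampling a latent vector with the reparameterization trick, and the KL-divergence term that keeps the encoder's distribution close to a standard normal prior. The sketch below assumes a diagonal Gaussian encoder that outputs a mean `mu` and log-variance `logvar` per latent dimension; `decoder` is a hypothetical stand-in for a trained decoder network, and plain Python lists replace framework tensors.

```python
import math
import random

def reparameterize(mu, logvar, rng=random):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1) in each dimension.
    In an autodiff framework, this form lets gradients flow to mu and logvar."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]

def kl_to_standard_normal(mu, logvar):
    """Closed-form KL( N(mu, diag(exp(logvar))) || N(0, I) )."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))

def sample_new(decoder, latent_dim, rng=random):
    """Generation: draw z from the prior N(0, I) and decode it.
    `decoder` is a hypothetical callable mapping a latent vector to data."""
    z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
    return decoder(z)

print(kl_to_standard_normal([1.0], [0.0]))  # 0.5
```

During training, the VAE loss is a reconstruction error plus this KL term; at generation time only the prior and the decoder are needed, as `sample_new` shows.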
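
The core operation inside the Transformers mentioned in the Generative Models component is scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. The sketch below is a deliberately simplified, single-head version on plain Python lists: it assumes the query, key, and value matrices are already given and omits the learned projections, multi-head splitting, and the causal mask that decoder-only models such as GPT-3 apply.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.
    Q: n_q x d_k, K: n_k x d_k, V: n_k x d_v, all as lists of lists."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)  # one weight per key, summing to 1
        # Output row is the weighted average of the value rows.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

print(attention([[0.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[2.0, 0.0], [4.0, 2.0]]))
```

With a zero query, every key scores the same, so the output is the plain average of the value rows, `[[3.0, 1.0]]`; a query aligned with one key would shift the weight toward that key's value.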
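
The Training and Optimization Tools component mentions automated hyperparameter tuning. The sketch below shows the simplest strategy such libraries automate, plain random search, written in standard-library Python rather than with Optuna or Hyperopt, which by default use smarter samplers such as TPE. The objective `toy_validation_loss` and the hyperparameter names `log_lr` and `dropout` are hypothetical stand-ins for training a model and measuring its validation loss.

```python
import random

def random_search(objective, space, n_trials=100, seed=0):
    """Minimize `objective` by sampling each hyperparameter uniformly from
    its (low, high) range in `space` and keeping the best trial seen."""
    rng = random.Random(seed)
    best_params, best_value = None, float("inf")
    for _ in range(n_trials):
        params = {name: rng.uniform(lo, hi) for name, (lo, hi) in space.items()}
        value = objective(params)
        if value < best_value:
            best_params, best_value = params, value
    return best_params, best_value

# Hypothetical stand-in for "validation loss as a function of hyperparameters",
# minimized at log_lr = -3 and dropout = 0.1.
def toy_validation_loss(p):
    return (p["log_lr"] + 3.0) ** 2 + (p["dropout"] - 0.1) ** 2

space = {"log_lr": (-6.0, -1.0), "dropout": (0.0, 0.5)}
best, loss = random_search(toy_validation_loss, space, n_trials=200)
print(best, loss)
```

Because each trial is independent, random search parallelizes trivially; the model-based samplers in dedicated libraries trade that simplicity for using past trials to choose the next ones.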

Applications of the Generative AI Tech Stack

The Generative AI tech stack has a wide range of applications across various industries. Here are some notable examples:

- Content Creation: Generative models can create text, images, and videos for marketing, entertainment, and journalism. For instance, GPT-3 can generate coherent and contextually relevant text, while GANs can produce realistic images and deepfakes.

- Healthcare: In healthcare, generative models are used to create synthetic medical data for research and to propose candidate molecules in drug discovery; their use in personalizing treatment plans is also being explored.

- Finance: In the finance sector, generative models can simulate market conditions, generate financial forecasts, and enhance algorithmic trading strategies.

- Gaming: The gaming industry uses generative AI to create realistic game environments, characters, and storylines, providing players with immersive experiences.

Conclusion

The Generative AI tech stack represents a powerful set of tools and technologies that enable the creation of innovative and diverse content. From data management and machine learning frameworks to generative models and deployment platforms, each component plays a crucial role in the development and application of generative AI. As technology continues to advance, the Generative AI tech stack will likely evolve, bringing new possibilities and challenges. Understanding these components and their interactions is key to harnessing the full potential of generative AI in various domains.
