As the capabilities of Large Language Models (LLMs) continue to advance, more organizations and individuals are interested in creating their own private versions of these powerful tools. Building a private LLM can provide tailored performance and enhanced data privacy. This article will guide you through the essential steps to build a private LLM, covering the necessary resources, setup, and best practices.

Introduction to LLMs

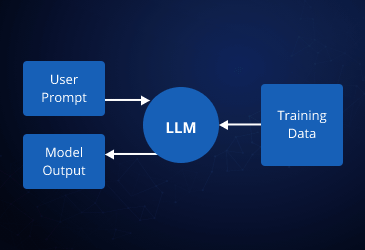

Large Language Models are AI systems trained on vast amounts of text data to understand and generate human language. They have a wide range of applications, from chatbots and content generation to research and data analysis. While public LLMs like GPT-4 are widely available, there are significant advantages to creating a private LLM, including customization to specific needs and control over data privacy.

Step-by-Step Guide to Building a Private LLM

1. Define Your Objectives

Before diving into the technical details, it’s crucial to define the purpose of your private LLM. Consider the following questions:

- What specific tasks will the LLM perform?

- What data will it need to process?

- Are there any particular privacy or security requirements?

Having clear objectives will help in selecting the right model architecture and training data.

2. Choose the Right Model Architecture

Selecting an appropriate model architecture is critical. Some popular LLM architectures include:

- GPT (Generative Pre-trained Transformer): A decoder-only architecture known for its strong generative capabilities.

- BERT (Bidirectional Encoder Representations from Transformers): An encoder-only architecture suited to understanding tasks such as classification and extractive question answering, but not designed for open-ended text generation.

- T5 (Text-To-Text Transfer Transformer): An encoder-decoder architecture that is flexible and can handle a variety of tasks by treating them all as text-to-text transformations.

3. Gather and Prepare Data

The quality and quantity of training data directly impact the performance of your LLM. Data preparation involves:

- Data Collection: Gather text data relevant to your domain. This can include articles, books, websites, and proprietary documents.

- Data Cleaning: Remove irrelevant content, correct errors, and format the data consistently.

- Data Augmentation: Enhance the dataset with synthetic data or by augmenting existing data (e.g., paraphrasing sentences).
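The cleaning step above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the function names, the `min_chars` threshold, and the choice to strip HTML-like tags with a regular expression are all assumptions made for the example. It removes tags, collapses whitespace, drops very short documents, and discards exact duplicates.

```python
import hashlib
import re

TAG_RE = re.compile(r"<[^>]+>")   # crude match for HTML-like tags
WS_RE = re.compile(r"\s+")        # any run of whitespace

def clean_text(raw):
    """Strip HTML-like tags and collapse runs of whitespace."""
    no_tags = TAG_RE.sub(" ", raw)
    return WS_RE.sub(" ", no_tags).strip()

def clean_corpus(docs, min_chars=20):
    """Clean each document, drop very short ones, remove exact duplicates.

    min_chars is an arbitrary illustrative threshold.
    """
    seen = set()
    kept = []
    for raw in docs:
        text = clean_text(raw)
        if len(text) < min_chars:
            continue
        # Hash a case-folded copy so duplicates differing only in case match.
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append(text)
    return kept
```

Real corpora usually also need near-duplicate detection and filtering of sensitive data, which this sketch does not attempt.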

4. Preprocessing Data

Preprocessing converts raw text into a format suitable for training. Common preprocessing steps include:

- Tokenization: Splitting text into tokens (words, subwords, or characters).

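- Normalization: Applying consistent Unicode normalization and whitespace handling, and dealing with special characters. Lowercasing and punctuation removal are common in classical NLP pipelines, but modern subword tokenizers usually preserve case and punctuation because both carry meaning.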

- Encoding: Converting tokens into numerical representations that the model can process.
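To make tokenization and encoding concrete, here is a toy word-level example. It is only a sketch: production LLMs typically use subword tokenizers (such as byte-pair encoding) rather than the simple regular expression assumed here, and the special tokens `<pad>` and `<unk>` and the function names are illustrative choices.

```python
import re

# Words, or single non-space punctuation characters, become tokens.
TOKEN_RE = re.compile(r"\w+|[^\w\s]")

def tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_RE.findall(text)

def build_vocab(texts):
    """Assign an integer ID to every token seen in the training texts."""
    vocab = {"<pad>": 0, "<unk>": 1}  # reserved IDs (illustrative)
    for text in texts:
        for tok in tokenize(text):
            if tok not in vocab:
                vocab[tok] = len(vocab)
    return vocab

def encode(text, vocab):
    """Map tokens to IDs, using <unk> for tokens not in the vocabulary."""
    return [vocab.get(tok, vocab["<unk>"]) for tok in tokenize(text)]

vocab = build_vocab(["The cat sat."])
ids = encode("The dog sat.", vocab)  # "dog" is unseen, so it maps to <unk>
```

The unknown-token fallback shows why subword tokenizers are preferred in practice: they can split an unseen word into known pieces instead of discarding it.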

5. Set Up the Training Environment

Training a large language model requires substantial computational resources. Options include:

- On-premise Servers: Dedicated servers with GPUs can be costly but offer complete control.

- Cloud Services: Providers like AWS, Google Cloud, and Azure offer scalable GPU instances, which can be more cost-effective and easier to manage.

Ensure you have the necessary software installed, including deep learning frameworks like TensorFlow or PyTorch.
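A back-of-the-envelope calculation helps when sizing hardware. The sketch below assumes mixed-precision training with the Adam optimizer, for which a commonly cited rule of thumb is roughly 16 bytes of GPU memory per parameter (weights, gradients, and optimizer states), excluding activations. The function names and the default value are assumptions for illustration, and the result should be read as a lower bound rather than a precise requirement.

```python
import math

def estimate_training_memory_gb(n_params, bytes_per_param=16):
    """Rough memory for weights, gradients, and optimizer state, in GB.

    bytes_per_param=16 is a rule of thumb for mixed-precision Adam;
    activation memory is NOT included.
    """
    return n_params * bytes_per_param / 1e9

def min_gpus_needed(n_params, gpu_memory_gb, bytes_per_param=16):
    """Lower bound on GPU count if model state is sharded evenly."""
    total_gb = estimate_training_memory_gb(n_params, bytes_per_param)
    return math.ceil(total_gb / gpu_memory_gb)

# Example: a 7-billion-parameter model on 80 GB GPUs.
memory_gb = estimate_training_memory_gb(7_000_000_000)
gpus = min_gpus_needed(7_000_000_000, gpu_memory_gb=80)
```

Under these assumptions a 7-billion-parameter model needs on the order of 112 GB for model state alone, which is one reason fine-tuning a pre-trained model is often more practical than training from scratch.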

6. Train the Model

Training an LLM is computationally intensive and time-consuming. Key steps include:

- Model Initialization: Choose the initial weights. Starting from a pre-trained model is usually far cheaper than training from randomly initialized weights, which requires much more data and compute.

- Training Process: Use backpropagation and gradient descent to adjust the model weights based on the training data. Monitor the process to ensure the model is learning effectively.

- Validation: Periodically evaluate the model on a validation set to check its performance and avoid overfitting.
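The training and validation steps above follow the same pattern at any scale. The sketch below is a deliberately tiny stand-in, not an LLM: it fits a two-parameter linear model with hand-written gradients so the loop stays readable. The learning rate, epoch count, and `patience` value are illustrative assumptions. In a real project a framework such as PyTorch or TensorFlow would compute gradients by backpropagation.

```python
def mse(w, b, data):
    """Mean squared error of the model y = w * x + b on (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def train(train_data, val_data, lr=0.05, epochs=500, patience=20):
    """Gradient descent with validation-based early stopping.

    Returns (best_val_loss, w, b) for the best validation checkpoint.
    """
    w, b = 0.0, 0.0
    best = (float("inf"), w, b)
    bad_epochs = 0
    n = len(train_data)
    for _ in range(epochs):
        # Gradients of the training MSE with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in train_data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in train_data) / n
        # Gradient descent update.
        w -= lr * grad_w
        b -= lr * grad_b
        # Validation: keep the best weights, stop if no improvement.
        val_loss = mse(w, b, val_data)
        if val_loss < best[0]:
            best = (val_loss, w, b)
            bad_epochs = 0
        else:
            bad_epochs += 1
            if bad_epochs >= patience:
                break
    return best

train_data = [(0, 1), (1, 3), (2, 5), (3, 7)]   # samples of y = 2x + 1
val_data = [(0.5, 2), (1.5, 4), (2.5, 6)]
val_loss, w, b = train(train_data, val_data)
```

Keeping the weights from the best validation epoch, rather than the last one, is a simple guard against overfitting.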

7. Fine-Tuning

After the initial training, fine-tuning adjusts the model for specific tasks or datasets. This involves:

- Task-Specific Tuning: Adapting the model to perform specific tasks like translation, summarization, or sentiment analysis.

- Domain-Specific Tuning: Refining the model to understand and generate text specific to your industry or field.
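Task-specific tuning usually starts with a file of input and output pairs. One common, though not universal, convention is JSON Lines with one example per line. The sketch below assumes the field names `prompt` and `completion`, which are illustrative; check what your fine-tuning tooling actually expects.

```python
import json

def to_jsonl(examples):
    """Serialize (prompt, completion) pairs as JSON Lines text.

    Pairs with an empty prompt or completion are skipped.
    Field names are assumptions for this example.
    """
    lines = []
    for prompt, completion in examples:
        prompt, completion = prompt.strip(), completion.strip()
        if not prompt or not completion:
            continue
        record = {"prompt": prompt, "completion": completion}
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines)

examples = [
    ("Summarize: The meeting ran long.", "Long meeting."),
    ("   ", "This pair has no prompt and is dropped."),
]
jsonl_text = to_jsonl(examples)
```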

8. Evaluate and Test

Thorough evaluation is essential to ensure your LLM performs well. Consider the following:

- Performance Metrics: Match the metric to the task: perplexity for language modeling, BLEU score for translation, and F1 score for classification or extraction tasks.

- User Testing: Have potential users interact with the model to provide feedback on its real-world performance.
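Two of the metrics mentioned above are simple enough to compute by hand, which helps in understanding what they measure. The sketch below assumes you already have per-token natural-log probabilities from the model (for perplexity) and binary labels (for F1); the function names are illustrative.

```python
import math

def perplexity(token_log_probs):
    """Perplexity = exp(average negative log-likelihood per token).

    Expects natural-log probabilities the model assigned to each
    correct token. Lower is better.
    """
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)

def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# A model that assigns probability 0.25 to every correct token is as
# uncertain as a uniform choice among four options.
ppl = perplexity([math.log(0.25)] * 4)
f1 = f1_score([1, 1, 0, 0], [1, 0, 1, 0])
```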

9. Deployment

Once the model is trained and fine-tuned, it’s ready for deployment. Steps include:

- Integration: Incorporate the model into your applications or services.

- Scalability: Ensure the system can handle the expected load, possibly using containerization tools like Docker and orchestration platforms like Kubernetes.

- Monitoring: Continuously monitor the model’s performance and update it as needed.
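As a rough picture of the integration step, the sketch below wraps a model behind a small HTTP endpoint using only Python's standard library. The `generate` function is a hypothetical placeholder for a call into your trained model, and the request and response field names (`prompt`, `completion`) are assumptions. A production deployment would typically use a dedicated serving framework with authentication, batching, and timeouts.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

def generate(prompt):
    """Hypothetical placeholder; replace with a call to the real model."""
    return f"echo: {prompt}"

def handle_payload(body):
    """Validate a JSON request body; return (status_code, response_dict)."""
    try:
        payload = json.loads(body.decode("utf-8"))
    except ValueError:  # covers invalid UTF-8 and invalid JSON
        return 400, {"error": "body must be valid JSON"}
    prompt = payload.get("prompt") if isinstance(payload, dict) else None
    if not isinstance(prompt, str) or not prompt:
        return 400, {"error": "missing 'prompt' string"}
    return 200, {"completion": generate(prompt)}

class Handler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status, result = handle_payload(self.rfile.read(length))
        data = json.dumps(result).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

def serve(host="127.0.0.1", port=8000):
    """Start the server; blocks until interrupted."""
    HTTPServer((host, port), Handler).serve_forever()
```

Calling `serve()` starts the endpoint on localhost. Keeping validation in `handle_payload`, separate from the HTTP handler, makes the logic easy to test without opening a socket.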

10. Maintain and Update

LLMs require ongoing maintenance to stay relevant and perform well. Regularly update the model with new data and improvements based on user feedback and changing requirements.

Conclusion

Building a private LLM involves a series of well-defined steps, from setting clear objectives and gathering data to training, fine-tuning, and deploying the model. With the right approach and resources, you can create a powerful language model tailored to your specific needs while ensuring data privacy and control. Whether for business applications or research, a private LLM can significantly enhance your capabilities in natural language processing.
