Large language models (LLMs) now power applications ranging from customer service chatbots to content generation. However, sending data to a publicly hosted LLM raises privacy and security concerns, and many organizations are looking for alternatives. A private LLM, one that is trained and run on infrastructure the organization controls, can offer modern natural language processing while keeping sensitive information in-house. This guide walks through the steps to build one.

Understanding the Need for a Private LLM

Publicly available LLMs have changed how many AI-driven applications are built. However, for organizations dealing with sensitive data such as medical records, financial transactions, or proprietary information, using a public LLM raises significant privacy concerns. By building a private LLM, organizations keep control over where their data is stored and processed, which makes it easier to meet obligations under regulations such as GDPR and HIPAA.

Leveraging Open-Source Frameworks

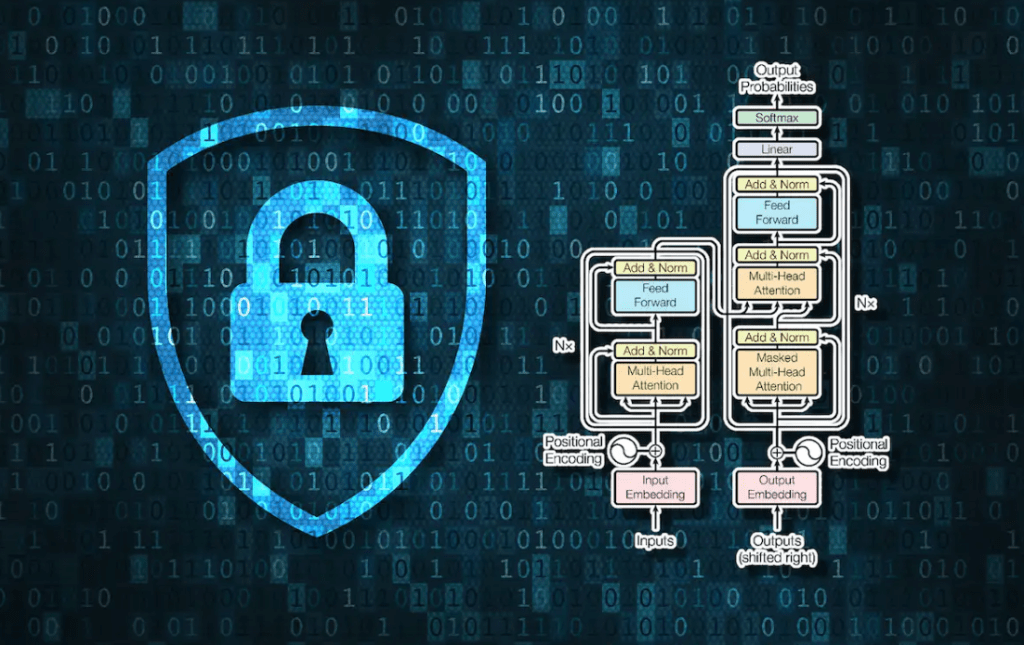

Building a private LLM from scratch can be a daunting task. Fortunately, there are several open-source frameworks available that provide a solid foundation for developing custom LLMs. One such framework is Hugging Face’s Transformers library, which offers pre-trained models and tools for fine-tuning them on specific datasets. By leveraging these frameworks, developers can significantly reduce the time and effort required to build a private LLM.
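As a rough illustration of what this looks like in practice, the sketch below loads a pre-trained causal language model from a local directory with Transformers and generates text from it. It assumes `transformers` and PyTorch are installed and that the model files have already been copied to disk. The function names and the `require_local_dir` guard are illustrative helpers, not part of the library.

```python
from pathlib import Path


def require_local_dir(model_dir: str) -> Path:
    """Return model_dir as a Path, or raise if it is not an existing directory.

    Illustrative guard (not a Transformers feature): it stops a mistyped path
    from being interpreted as a model ID to look up elsewhere.
    """
    path = Path(model_dir)
    if not path.is_dir():
        raise FileNotFoundError(f"No local model directory at {path}")
    return path


def generate_locally(model_dir: str, prompt: str, max_new_tokens: int = 50) -> str:
    """Load a causal LM from disk and generate a continuation of the prompt."""
    # Imported lazily so the guard above works without Transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    path = str(require_local_dir(model_dir))
    # local_files_only=True tells Transformers not to contact the Hugging Face Hub.
    tokenizer = AutoTokenizer.from_pretrained(path, local_files_only=True)
    model = AutoModelForCausalLM.from_pretrained(path, local_files_only=True)

    inputs = tokenizer(prompt, return_tensors="pt")
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

Loading from a local path with `local_files_only=True` keeps both the model weights and the prompts on machines you control, which is the point of a private deployment.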

Collecting and Preprocessing Data

The quality of the data used to train an LLM has a significant impact on its performance. When building a private LLM, it’s essential to collect a diverse range of high-quality data relevant to the domain in which the model will be deployed. This may involve gathering data from various sources such as internal documents, customer interactions, or publicly available datasets. Once the data is collected, it must be preprocessed to remove noise, handle missing values, and ensure consistency.
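The preprocessing steps described above can be sketched with the Python standard library alone. This is a minimal example under stated assumptions: records are dictionaries with a `"text"` field, and the `min_chars` threshold and the function names are hypothetical choices for illustration rather than recommended values.

```python
import re
import unicodedata


def clean_text(text: str) -> str:
    """Normalize Unicode, strip control characters, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    # Drop control characters, but keep newlines and tabs so that they turn
    # into spaces in the next step instead of gluing words together.
    text = "".join(
        ch for ch in text if ch in "\n\t" or unicodedata.category(ch) != "Cc"
    )
    # Collapse any run of whitespace (including newlines) into a single space.
    return re.sub(r"\s+", " ", text).strip()


def preprocess(records: list[dict], min_chars: int = 20) -> list[str]:
    """Return cleaned, de-duplicated texts from records with a "text" field."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for record in records:
        raw = record.get("text")
        if not isinstance(raw, str):  # missing value: skip the record
            continue
        text = clean_text(raw)
        if len(text) < min_chars:  # too short to be useful: treat as noise
            continue
        key = text.casefold()
        if key in seen:  # duplicate after normalization, ignoring case
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned
```

Real pipelines usually add domain-specific steps on top of this, such as removing personal identifiers before the data is used for training.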

Fine-Tuning the Model

Fine-tuning is the process of continuing to train a pre-trained LLM on a specific dataset to adapt it to a particular task or domain. When building a private LLM, fine-tuning is what makes the model perform well on the target task, and running it on infrastructure you control keeps the underlying data private. Fine-tuning is a form of transfer learning: the knowledge a model gained from training on a large, general dataset is carried over and refined using a smaller, domain-specific one.
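One possible shape for a fine-tuning run is sketched below using the Transformers `Trainer` API. It assumes `transformers`, `datasets`, and PyTorch are installed, and that the training data is a list of at least two plain-text strings. The names `split_texts` and `fine_tune`, the `base_model` argument, and all hyperparameter values are placeholders for illustration, not tuned recommendations.

```python
import random


def split_texts(texts: list[str], eval_fraction: float = 0.1, seed: int = 0):
    """Shuffle texts deterministically and hold out a fraction for evaluation."""
    shuffled = texts[:]
    random.Random(seed).shuffle(shuffled)
    n_eval = max(1, int(len(shuffled) * eval_fraction))
    return shuffled[n_eval:], shuffled[:n_eval]


def fine_tune(texts: list[str], base_model: str, output_dir: str) -> None:
    """Fine-tune a causal LM on texts and save the result to output_dir."""
    # Lazy imports: these third-party packages are only needed for training.
    from datasets import Dataset
    from transformers import (
        AutoModelForCausalLM,
        AutoTokenizer,
        DataCollatorForLanguageModeling,
        Trainer,
        TrainingArguments,
    )

    tokenizer = AutoTokenizer.from_pretrained(base_model)
    if tokenizer.pad_token is None:
        # Some causal LM tokenizers ship without a padding token.
        tokenizer.pad_token = tokenizer.eos_token
    model = AutoModelForCausalLM.from_pretrained(base_model)

    def tokenize(batch):
        return tokenizer(batch["text"], truncation=True, max_length=512)

    train_texts, eval_texts = split_texts(texts)
    train_ds = Dataset.from_dict({"text": train_texts}).map(
        tokenize, batched=True, remove_columns=["text"]
    )
    eval_ds = Dataset.from_dict({"text": eval_texts}).map(
        tokenize, batched=True, remove_columns=["text"]
    )

    args = TrainingArguments(
        output_dir=output_dir,
        num_train_epochs=1,
        per_device_train_batch_size=2,
        learning_rate=2e-5,
        report_to="none",  # do not send metrics to external tracking services
    )
    trainer = Trainer(
        model=model,
        args=args,
        train_dataset=train_ds,
        eval_dataset=eval_ds,
        # mlm=False selects the causal (next-token) language-modeling objective.
        data_collator=DataCollatorForLanguageModeling(tokenizer=tokenizer, mlm=False),
    )
    trainer.train()
    trainer.save_model(output_dir)
    tokenizer.save_pretrained(output_dir)
```

Passing a local directory as `base_model` and writing to a local `output_dir` keeps the whole run on your own hardware. Holding out part of the data before training gives you an evaluation set the model has never seen.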

Evaluating Performance and Iterating

Once the private LLM has been fine-tuned, it’s essential to evaluate its performance thoroughly. This involves testing the model on a separate validation dataset that was not used for training. Where the task has well-defined correct answers, as in classification, this means measuring metrics such as accuracy, precision, recall, and F1 score. Based on the evaluation results, developers can identify areas where the model performs well and areas where it needs improvement. Iterative refinement is key to building a high-quality private LLM that meets the needs of its intended application.
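To make these metrics concrete, here is a small sketch that computes them for a binary classification task, where each label is 1 (positive) or 0 (negative). The function name and the convention of returning 0.0 when a denominator is zero are choices made for this example.

```python
def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Compute accuracy, precision, recall, and F1 for 0/1 labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}
```

For example, with `y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]` and `y_pred = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]` there are 3 true positives, 2 false positives, and 1 false negative, so accuracy is 0.7, precision is 0.6, and recall is 0.75.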

Ensuring Data Privacy and Security

One of the primary advantages of building a private LLM is the ability to maintain control over sensitive data. However, ensuring data privacy and security requires careful consideration at every stage of the development process. This includes implementing encryption and access controls to protect data both during training and inference, as well as regularly auditing and updating security measures to address emerging threats.
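As one illustration of access controls and auditing at inference time, the sketch below checks a caller's role before running the model and records every attempt in an audit log. The role names, the `ROLE_PERMISSIONS` table, and the `model_fn` callable are all hypothetical. Encryption is left out because Python's standard library has no symmetric cipher; in practice it would come from a vetted cryptography library.

```python
import hashlib
import json
import time

# Hypothetical role-to-permission mapping; a real deployment would load this
# from its identity provider or policy store.
ROLE_PERMISSIONS = {
    "analyst": {"infer"},
    "ml_engineer": {"infer", "train"},
}


def is_allowed(role: str, action: str) -> bool:
    """Return True if the role is permitted to perform the action."""
    return action in ROLE_PERMISSIONS.get(role, set())


def audit_record(user: str, role: str, action: str, prompt: str) -> str:
    """Build a JSON audit entry that stores a hash of the prompt, not its text."""
    entry = {
        "time": time.time(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": is_allowed(role, action),
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }
    return json.dumps(entry, sort_keys=True)


def guarded_infer(user: str, role: str, prompt: str, model_fn, audit_log: list[str]) -> str:
    """Log the attempt, then run model_fn only if the role may call inference."""
    audit_log.append(audit_record(user, role, "infer", prompt))
    if not is_allowed(role, "infer"):
        raise PermissionError(f"role {role!r} may not run inference")
    return model_fn(prompt)
```

Logging a hash instead of the prompt keeps sensitive text out of the audit trail while still letting reviewers spot repeated requests. A plain hash is not encryption, though, and short or predictable prompts could still be guessed from it.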

Conclusion

Building a private LLM offers organizations the opportunity to harness the power of natural language processing while maintaining control over their data. By leveraging open-source frameworks, collecting and preprocessing high-quality data, fine-tuning the model, and prioritizing data privacy and security, developers can create custom LLMs tailored to their specific needs. While the process may be challenging, the benefits of a private LLM in terms of data privacy, security, and performance make it a worthwhile investment for organizations operating in sensitive domains.
